Most enterprise AI initiatives don't fail because of the AI. They fail because of the data.

Companies have invested heavily in machine learning, business intelligence, and generative AI, but the operational data that would actually make those investments useful is still trapped inside complex ERP systems, fragmented pipelines, and legacy architectures that were never designed with agents in mind.

That's the problem Incorta and Google Cloud solve together. As a Google Cloud partner, Incorta is a foundational part of how enterprises get from raw operational data to production-ready AI agents, fast.

Every analytics initiative starts with the same assumption: the data will be there when you need it. In practice, it rarely is.

Traditional ETL processes strip away the transaction-level detail that AI and analytics actually need. ERP databases like SAP and Oracle can contain 10,000+ tables. Reverse-engineering them into usable pipelines takes months—sometimes years. And once you've built those pipelines, they're fragile. A vendor patch or schema change can break the whole thing, and the institutional knowledge required to fix it often lives with just one or two engineers.

The result: data latency measured in hours or days, partial data sets, and AI models that can't act on your most valuable operational information.

Incorta solves this with Direct Data Mapping™ (DDM)—patented technology that connects directly to source systems like Oracle Fusion, SAP, Workday, and Salesforce, ingests data in its native form (3NF), and delivers 100% of it to Google BigQuery without traditional ETL. No deconstructing. No manual transformations. No data loss.

With over 240 pre-built connectors and automated schema detection, Incorta eliminates the engineering bottleneck entirely. What traditionally takes 18–24 months can happen in weeks. Hormel Foods, for example, replaced an entire Oracle data warehouse with an Incorta-to-BigQuery pipeline in just 10 weeks.

Delivering data faster is only part of the story. The bigger shift happening right now is agentic AI, and it requires something fundamentally different from what most data architectures were built to provide.

A dashboard needs aggregated, high-level trends. An AI agent needs something else entirely:

Granular data. Agents need transaction-level detail to analyze exceptions, detect anomalies, and take precise action. Pre-aggregated data makes their reasoning shallow and incomplete.

Real-time access. Whether an agent is reconciling financial entries, processing invoices, or optimizing supply chain flows, it needs to act on current data—not a batch from last night.

Business context. Raw numbers aren't enough. Agents need to understand why an invoice belongs to a vendor, how an expense rolls up into a cost center, how a shipment ties to an order. Traditional architectures flatten or abstract this context away, leaving agents blind to business logic.
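To make the granularity point concrete, here is a minimal Python sketch. The invoice rows, field names, and helper functions are hypothetical illustrations, not part of any Incorta or Google Cloud API. It shows how a likely duplicate invoice that is visible at transaction level disappears once the same rows are rolled up into a monthly total.

```python
from collections import defaultdict

# Hypothetical transaction-level invoice rows (illustrative data only).
invoices = [
    {"invoice_id": "INV-001", "vendor": "Acme", "po": "PO-10", "amount": 1200.00, "month": "2024-03"},
    {"invoice_id": "INV-002", "vendor": "Acme", "po": "PO-11", "amount": 800.00, "month": "2024-03"},
    {"invoice_id": "INV-003", "vendor": "Acme", "po": "PO-10", "amount": 1200.00, "month": "2024-03"},
]


def monthly_totals(rows):
    """Pre-aggregated view: one number per vendor per month."""
    totals = defaultdict(float)
    for row in rows:
        totals[(row["vendor"], row["month"])] += row["amount"]
    return dict(totals)


def find_duplicate_invoices(rows):
    """Transaction-level view: flag invoices that share vendor, PO, and amount."""
    seen = {}
    duplicates = []
    for row in rows:
        key = (row["vendor"], row["po"], row["amount"])
        if key in seen:
            duplicates.append((seen[key], row["invoice_id"]))
        else:
            seen[key] = row["invoice_id"]
    return duplicates


totals = monthly_totals(invoices)
duplicates = find_duplicate_invoices(invoices)
print(totals)      # a single total per vendor and month; the duplicate is invisible here
print(duplicates)  # pairs of invoice IDs that look like the same charge billed twice
```

The aggregated view only says that Acme was paid 3,200 in March. Only the row-level view can show that INV-001 and INV-003 appear to be the same charge.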

Incorta addresses all three with a semantic layer built directly into its platform. When data is ingested via DDM, Incorta automatically captures source relationships and keeps them intact, translating complex ERP data models into logical, business-centric datasets. Business schemas organize these relationships, standardize calculations, and enforce governance, so every downstream consumer, whether a dashboard or an AI agent, is working from the same trusted definitions.

Incorta calls this enterprise truth: the verified, context-rich operational knowledge that accurately represents how your business actually works. It includes live data from ERP, CRM, and HR systems; historical records; business logic; and trusted third-party information, all delivered to BigQuery as a continuously updated foundation for intelligent automation.

Once enterprise truth is in BigQuery, Google Gemini Enterprise can do something genuinely powerful with it.

Gemini Enterprise is Google's secure, enterprise-grade environment for building, deploying, and managing customizable AI agents at scale. Using the Agent Development Kit (ADK), developers can define agents that connect to BigQuery, reason over live enterprise data, and take autonomous action.

Here's what that looks like in practice.

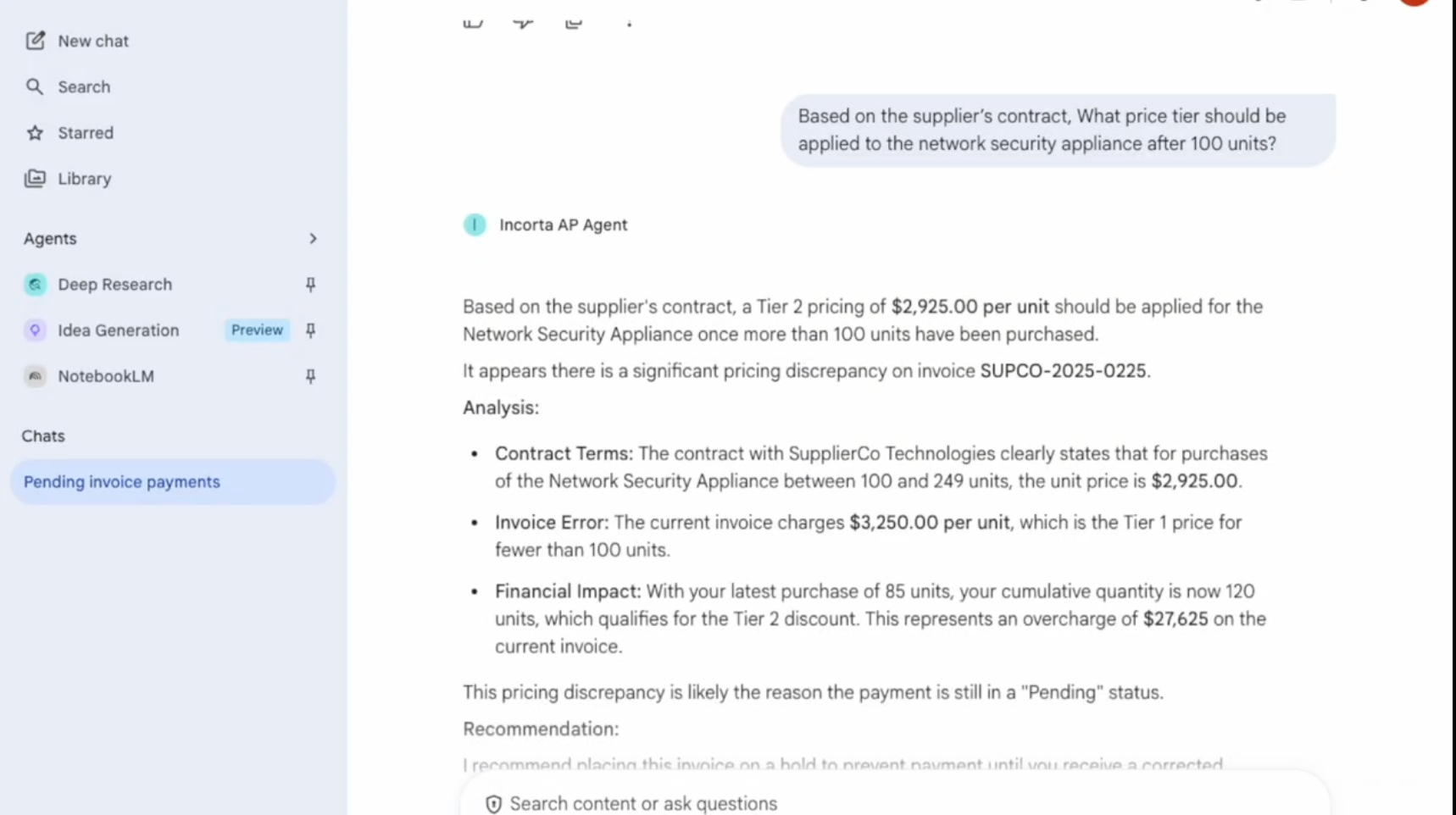

A financial analyst at a large enterprise might spend hours manually reviewing invoices for discrepancies against purchase orders and contracts. With an Incorta-powered Gemini agent, the workflow changes entirely:

The same agent can generate reports, create visualizations, and answer business questions, all from a single interface and grounded in real operational data.

This is what separates production-grade agentic AI from a compelling demo. The intelligence is in the model. The reliability is in the data foundation.

The joint Incorta and Google Cloud solution brings together:

The result is a data pipeline that goes from months to weeks, AI agents grounded in operational reality rather than generic model training, and an analytics architecture that scales across departments without duplicating effort or fragmenting governance.

If your AI initiatives are stalling at the pilot stage, the bottleneck is almost certainly the data, not the model. Incorta and Google Cloud give you a clear, faster path to fixing that.


Summary: Most enterprises building agentic AI on Gemini Enterprise hit the same wall: the model is ready, but the ERP data behind it isn't. Agents pull from stale exports and siloed systems, fill gaps with assumptions, and deliver outputs teams don't trust. The fix is three things most ERP pipelines were never built to deliver: an automated semantic layer, full business context, and live data. Incorta delivers all three to BigQuery automatically, giving Gemini Enterprise the complete, accurate foundation it needs to power production-ready agentic AI on Oracle, SAP, and Workday data.

You've seen what Gemini Enterprise can do. The reasoning is there. The agentic capabilities are there. But when you connect it to your actual ERP data, the outputs don't hold up, and your team doesn't trust them enough to act.

You're not alone. 91% of AI models experience quality degradation over time because of stale, incomplete, or fragmented data. More than 80% of AI projects fail, and the root cause is almost never the model. It's the data foundation behind it.

Right now, most enterprises feed Gemini summaries, old exports, and siloed data that was built for dashboards, not for AI to reason on. When an agent hits a gap, it fills it with an assumption. The output looks confident. The logic underneath it isn't.

That's why only 2% of organizations have implemented AI agents at scale, even though more than 65% are piloting or exploring. The gap between a Gemini pilot that works in a demo and a production deployment your team relies on comes down to one thing: whether your agents have enough context to reason on your data the way your business actually needs them to.

The enterprises that are getting agentic AI into production on Gemini Enterprise have all solved the same foundational problem. They've given their agents three things most ERP pipelines were never built to deliver:

A semantic layer. Your ERP stores data in tables and transaction codes. Gemini needs to understand what that data means in business terms — what a PO status implies, how a GL account maps to a cost center, which inventory fields signal risk. Without a semantic layer, your agents are working with numbers they can't interpret.

Full business context. A single data point means nothing without the relationships around it. A Gemini agent analyzing inventory needs to see the connections across POs, demand forecasts, supplier lead times, and warehouse capacity. Most pipelines deliver flat, summarized data that strips out this context before it ever reaches BigQuery.

Live data. Agents making decisions on data that's hours or days old aren't making real decisions. In supply chain, that's the difference between catching a stock-out early and writing down millions in SLOB inventory. In finance, it's the difference between a real-time variance explanation and a manual reconciliation that takes your team days.

The challenge is that most enterprises spend 80% of their time building data connectors instead of training agents to handle intelligent workflows. Legacy ERPs weren't designed for real-time AI access. They export CSVs, use outdated APIs, and silo critical business data across dozens of modules.

So teams face an impossible choice: limit their Gemini agents to the data that's easy to access and accept incomplete outputs, or spend months building custom pipelines for every ERP system. Most choose the second option and get stuck — McKinsey reports that only 40% of companies see any enterprise-level impact from their AI initiatives.

This is exactly why Incorta and Google Cloud built a better path.

Incorta delivers all three (an automated semantic layer, full business context, and live ERP data) directly to BigQuery. No custom pipelines. No months of implementation. Your Oracle, SAP, and Workday data arrives in BigQuery complete, accurate, and structured for Gemini Enterprise to reason on.

Your agents get the full picture. Your team gets answers they trust enough to act on. And you go from pilot to production without the bottleneck that stalls everyone else.

That's what 100% context and 100% accuracy actually looks like when you're building on Gemini Enterprise.

We'll be at Google Cloud Next, April 22–24 in Las Vegas, Booth #2811. Come see how Incorta automatically generates the business context Gemini Enterprise needs to power production-ready agentic AI on your ERP data.

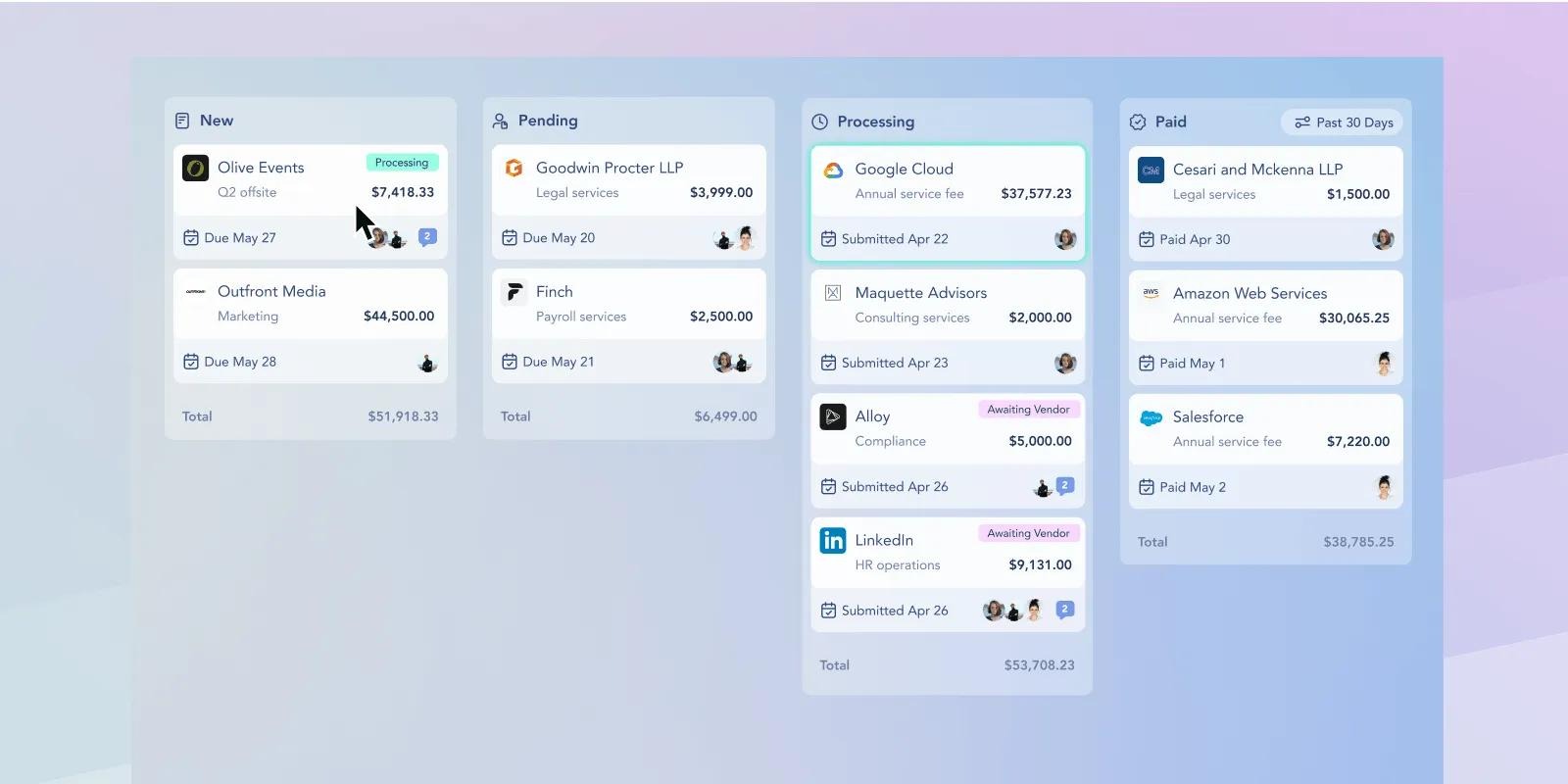

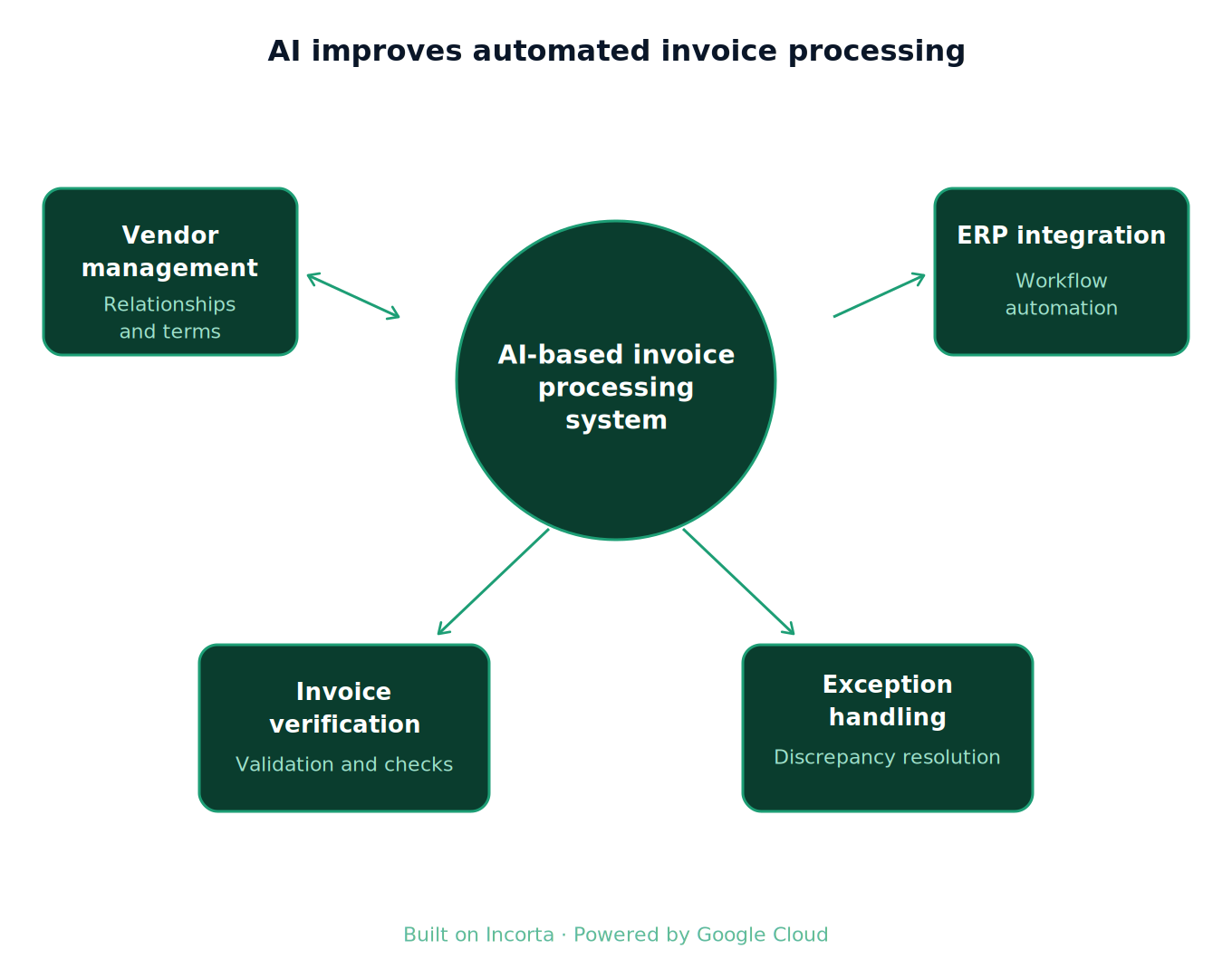

Accounts payable has always been one of the most operationally intensive functions in the enterprise, burdened by manual invoice processing, fragmented data, and constant reconciliation cycles. The Intelligent AP Agent changes that. Built on Incorta as the data foundation and on Google Cloud Agent Builder, it redefines AP as an AI-driven, autonomous system.

This shift is bigger than AP alone: it's the beginning of agentic finance operations across the enterprise.

Most AP teams are working across disconnected ERP, contract, and supplier systems with no unified view of the underlying data. Invoice matching is manual, pricing variances slip through, and by the time an overpayment surfaces, the damage is done.

The root cause lives in that underlying data foundation. When financial data lives in fragmented systems, no workflow can fix what the foundation doesn't support.

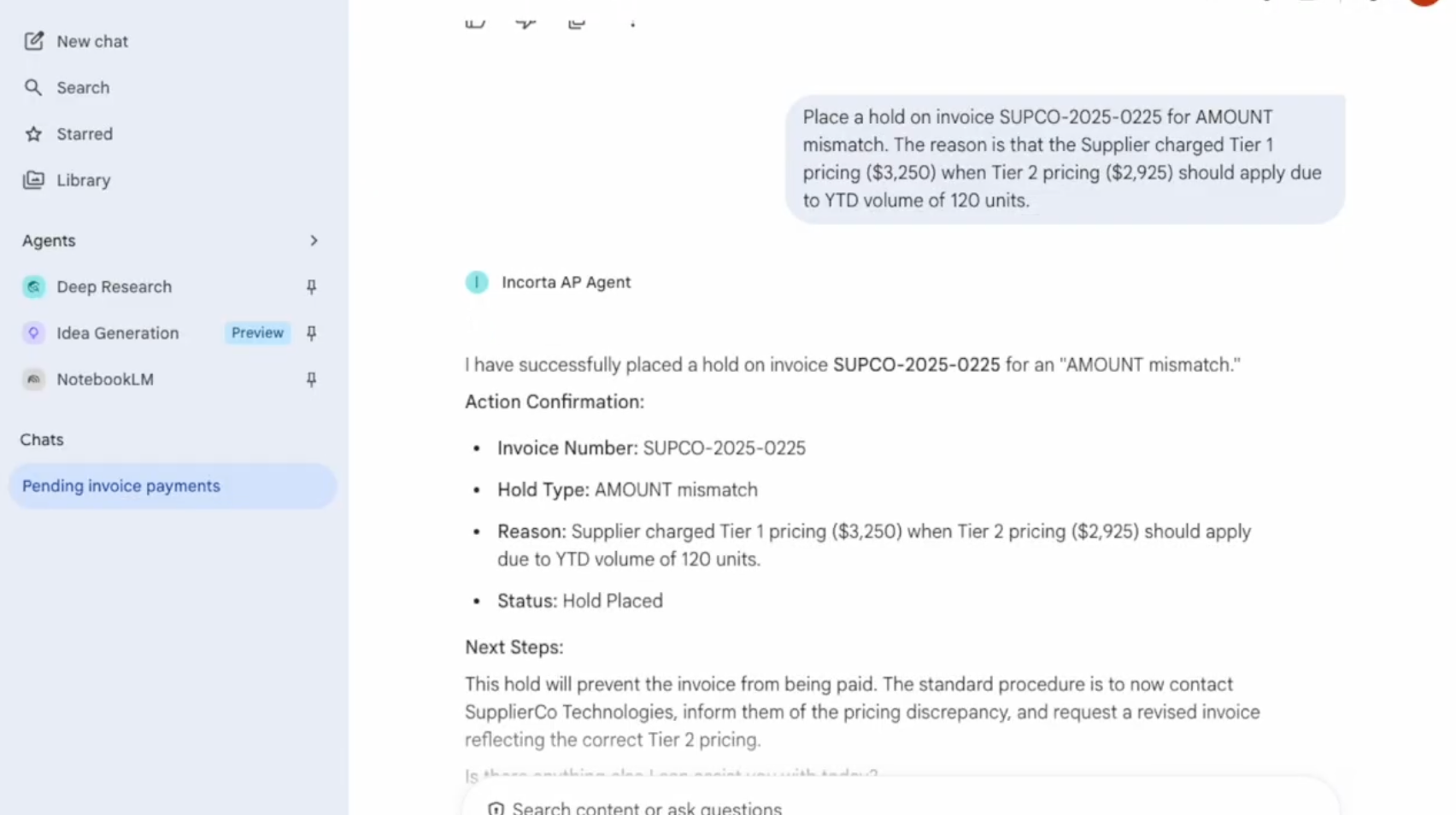

The Incorta AP Agent doesn't stop at moving invoices through a queue. It reads them, validates them against contracts and purchase orders, and acts automatically.
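The validation step described above can be sketched in a few lines of Python. This is an illustrative sketch only: the `InvoiceLine` type, the `validate` function, the tolerance, and the reference data are all hypothetical and do not represent the agent's actual implementation. It checks one invoice line against its purchase order and against a contracted price.

```python
from dataclasses import dataclass


@dataclass
class InvoiceLine:
    invoice_id: str
    po_number: str
    item: str
    quantity: int
    unit_price: float


# Hypothetical reference data; in practice this would come from ERP and contract systems.
PO_LINES = {("PO-10", "widget"): {"quantity": 100, "unit_price": 12.00}}
CONTRACT_PRICES = {"widget": 11.50}


def validate(line, po_lines=PO_LINES, contract_prices=CONTRACT_PRICES, tolerance=0.01):
    """Return a list of exceptions found for one invoice line (empty if clean)."""
    po = po_lines.get((line.po_number, line.item))
    if po is None:
        return [f"{line.invoice_id}: no matching PO line"]

    issues = []
    if line.quantity > po["quantity"]:
        issues.append(f"{line.invoice_id}: billed quantity exceeds PO quantity")
    if abs(line.unit_price - po["unit_price"]) > tolerance:
        issues.append(f"{line.invoice_id}: unit price differs from PO")

    # The invoice can match the PO and still be priced above the contract.
    contract_price = contract_prices.get(line.item)
    if contract_price is not None and line.unit_price - contract_price > tolerance:
        overpayment = (line.unit_price - contract_price) * line.quantity
        issues.append(
            f"{line.invoice_id}: price above contract, potential overpayment {overpayment:.2f}"
        )
    return issues


issues = validate(InvoiceLine("INV-7", "PO-10", "widget", 100, 12.00))
print(issues)
```

In this example the invoice agrees with the purchase order, so a PO-only match would pass it. The overpayment only surfaces because the contract terms are available in the same check.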

Powered by Gemini Enterprise and Vertex AI, running on top of Incorta's real-time operational data foundation, the agent:

In production deployments, this translates to thousands of dollars recovered per invoice, not from process improvement alone but from catching what was previously invisible.

What makes this system different is the data layer underneath it. Incorta connects directly to ERP systems like Oracle Fusion, with no heavy ETL, no staging tables, and no latency between source data and the agent consuming it. When an invoice comes in, the agent is working with the same data that lives in the system of record, not a copy from last night's batch.

The stack looks like this:

Decisions happen in the same motion as data arrival.

The most significant piece of this architecture is how the agents communicate.

The Agent2Agent (A2A) protocol, an open standard that Google Cloud introduced at Google Cloud Next with support from partners including Incorta, gives agents a standard way to share context and coordinate decisions. When the AP Agent flags a pricing mismatch, it doesn't just log a ticket. It sends a structured request to a contract validation agent, passes the relevant invoice and supplier terms as context, receives a response, and acts, all without a human in the loop.
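The request-and-response exchange described above might look something like the following Python sketch. The message envelopes, agent names, and decision rule here are hypothetical and are not the actual A2A message schema or wire format. The remote agent is simulated by a local function so the example runs on its own.

```python
import json
import uuid
from dataclasses import asdict, dataclass, field


@dataclass
class AgentRequest:
    """Hypothetical request envelope; not the actual A2A message schema."""
    sender: str
    recipient: str
    task: str
    context: dict
    request_id: str = field(default_factory=lambda: str(uuid.uuid4()))


@dataclass
class AgentResponse:
    request_id: str
    status: str
    details: dict


def contract_validation_agent(raw_request: str) -> str:
    """Stand-in for a remote agent: checks the invoice price against contract terms."""
    request = json.loads(raw_request)
    context = request["context"]
    within_terms = context["invoice_unit_price"] <= context["contract_unit_price"]
    response = AgentResponse(
        request_id=request["request_id"],
        status="approved" if within_terms else "rejected",
        details={"contract_unit_price": context["contract_unit_price"]},
    )
    return json.dumps(asdict(response))


def ap_agent_handle_mismatch(invoice_id: str, invoice_price: float, contract_price: float) -> str:
    """AP agent side: send structured context, read the reply, choose an action."""
    request = AgentRequest(
        sender="ap-agent",
        recipient="contract-validation-agent",
        task="validate_pricing",
        context={
            "invoice_id": invoice_id,
            "invoice_unit_price": invoice_price,
            "contract_unit_price": contract_price,
        },
    )
    raw_response = contract_validation_agent(json.dumps(asdict(request)))
    response = json.loads(raw_response)
    if response["request_id"] != request.request_id:
        raise ValueError("response does not match the request that was sent")
    return "release_payment" if response["status"] == "approved" else "hold_payment"


print(ap_agent_handle_mismatch("INV-7", 12.00, 11.50))  # prints "hold_payment"
```

The point of the sketch is the shape of the interaction: the context travels with the request as structured data, and the calling agent acts on a typed response rather than on free text.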

This matters because most enterprise AI deployments today are isolated: one agent, one system, one task. A2A turns those isolated agents into a network in which finance agents talk to procurement agents and procurement agents talk to compliance agents. The AP Agent becomes a participant in a broader system that can handle complexity no single workflow could.

Organizations running the Incorta AP Agent can expect:

The bigger shift is structural. Finance has always been a lagging indicator: you found out what happened after it happened. An agentic AP system makes finance a real-time function and lets decisions happen the second data arrives.

That's the transition underway: systems of record becoming systems of action, analytics giving way to autonomy, and users handing off to agents.

The architecture behind the Intelligent AP Agent is a template for that transition, and Incorta and Google Cloud are building the infrastructure that makes it possible.

To learn more, visit incorta.com.

Summary: Incorta solves one of the most common blockers to enterprise AI: getting clean, complete, AI-ready data out of complex ERP systems like Oracle, SAP, Workday, and NetSuite. Where custom-built pipelines typically take 18 to 24 months to deliver reliable data and absorb 75 to 80% of ongoing maintenance costs, Incorta compresses time-to-value from years to weeks using prebuilt ERP blueprints and automated data modeling. Incorta preserves full transaction-level granularity, native relational context across ERP objects, and supports near real-time ingestion, giving AI agents the data quality they need to reason, automate, and act. For data and AI leaders evaluating build vs. buy for ERP-driven AI strategies, Incorta eliminates the engineering burden of building and maintaining custom ERP pipelines and converts unpredictable infrastructure spend into a predictable subscription model.

Your AI strategy has a data problem, and your ERP is at the center of it

AI initiatives stall for plenty of reasons. Budgets get approved, use cases get mapped, stakeholder buy-in gets secured, and then the data isn't ready. That's the conversation happening in data and IT leadership teams across every industry right now, and it almost always traces back to ERP systems.

Why ERP data is so hard to work with

ERP platforms hold the most valuable operational data in the enterprise: purchase orders, invoices, vendor records, general ledger entries, inventory movements. For AI to reason and automate, it needs this data granular, current, and contextually intact.

ERP systems were built to run operations, not to feed AI. Their schemas are sprawling (often tens of thousands of tables), relationships are complex and frequently undocumented, and many platforms include structural quirks like tables without primary keys that complicate replication and modeling. Getting clean, AI-ready data out of an ERP system is a multi-year undertaking for most organizations, and that timeline is incompatible with most AI roadmaps.

Why custom ERP pipeline builds fail

Time to value is longer than expected. Internal ERP data builds typically require 18 to 24 months before the business has reliable, AI-ready data. Every quarter spent building infrastructure is a quarter without AI value.

Total cost of ownership escalates. Custom builds absorb 75 to 80% of ongoing maintenance burden, including patches, updates, and accumulated technical debt. As AI scales, demands on the data layer grow: more volume, faster refresh cycles, more governance requirements. What starts as a bounded engineering project becomes an OPEX-heavy liability.

ERP pipelines require domain expertise, not just engineering. Understanding which tables matter, how business rules are embedded, and how cross-module dependencies behave takes years to develop. Pipelines built without that knowledge look correct and break in production.

Technical debt accumulates into AI risk. McKinsey estimates a 10 to 20% tech debt tax on engineering projects, diverting innovation budgets to remediation. Gartner puts the average annual cost of poor data quality at $12.9 million. Unstable pipelines produce unreliable AI outputs, and unreliable outputs kill adoption.

What AI actually needs from your ERP data

AI has three non-negotiable data requirements.

Granularity. AI models need full transaction-level detail. Pre-aggregated data reduces the signal AI needs to find patterns, anomalies, and causal relationships.

Timeliness. Agentic AI requires near real-time data. Batch pipelines with multi-hour latency make alert-triggering, approval automation, and exception flagging impossible.

Context. ERP data only makes sense relationally. An invoice needs its purchase order, receipt record, vendor history, and GL posting to be meaningful. AI needs that relational structure (PO to Receipt to Invoice to Vendor to GL) preserved, not flattened.

Custom builds routinely compromise on at least one of these. Data gets summarized to reduce complexity. Refresh cycles get extended to manage engineering load. Relationships get lost in transformation.
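The context requirement is easiest to see with a small example. The sketch below uses Python's built-in `sqlite3` module with a deliberately simplified, hypothetical schema (the table and column names are not those of any real ERP) to keep the PO to Receipt to Invoice to Vendor to GL chain intact and ask a question that a flattened invoice table could not answer.

```python
import sqlite3

# In-memory database with a simplified, hypothetical procure-to-pay schema.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE vendors (vendor_id INTEGER PRIMARY KEY, name TEXT);
CREATE TABLE purchase_orders (po_id INTEGER PRIMARY KEY, vendor_id INTEGER, ordered_qty INTEGER);
CREATE TABLE receipts (receipt_id INTEGER PRIMARY KEY, po_id INTEGER, received_qty INTEGER);
CREATE TABLE invoices (invoice_id INTEGER PRIMARY KEY, po_id INTEGER, billed_qty INTEGER, amount REAL);
CREATE TABLE gl_postings (posting_id INTEGER PRIMARY KEY, invoice_id INTEGER, account TEXT);

INSERT INTO vendors VALUES (1, 'Acme');
INSERT INTO purchase_orders VALUES (10, 1, 100);
INSERT INTO receipts VALUES (500, 10, 80);
INSERT INTO invoices VALUES (9001, 10, 100, 1200.0);
INSERT INTO gl_postings VALUES (1, 9001, '5000-Materials');
""")

# Which invoices bill for more than was actually received, and where were they posted?
query = """
SELECT i.invoice_id, v.name, po.ordered_qty, r.received_qty, i.billed_qty, gl.account
FROM invoices i
JOIN purchase_orders po ON po.po_id = i.po_id
JOIN receipts r ON r.po_id = po.po_id
JOIN vendors v ON v.vendor_id = po.vendor_id
JOIN gl_postings gl ON gl.invoice_id = i.invoice_id
WHERE i.billed_qty > r.received_qty
"""
rows = conn.execute(query).fetchall()
print(rows)
```

A summarized extract holding only `invoice_id` and `amount` would show a perfectly ordinary invoice. The exception (billed for 100 units, received 80) exists only in the relationships between the tables.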

What a purpose-built platform can change

Purpose-built ERP data platforms address all three requirements by design. They ship with prebuilt blueprints for major ERP systems including Oracle, SAP, Workday, and NetSuite, encoding domain expertise that would otherwise take years to develop internally. They preserve native relationships and business logic without manual reconstruction and support near real-time ingestion.

On the cost side, buying converts unpredictable engineering spend into a predictable subscription. ERP schema changes, performance tuning, security patches, and infrastructure evolution become the vendor's responsibility.

The data on this is clear. IDC reports that 80% of data professional time is still spent on discovery and preparation rather than analytics. IDC Qlik research finds 81% of companies struggle with AI data quality in ways that directly undermine ROI. Gartner predicts over 40% of agentic AI projects will be canceled by 2027 because operational and data foundations were not ready.

Data leaders: build vs. buy?

Build vs. buy is no longer a tooling conversation. It is a strategic decision with real timing consequences. AI value does not accrue while you are still building infrastructure. Organizations moving fastest on AI are not the ones with the most sophisticated in-house data engineering. They are the ones operating on a data foundation built for this moment.

Download our whitepaper, The AI Data Foundation Dilemma: Build vs. Buy for ERP-Driven AI Strategies, for the complete five-dimension analysis.

Summary: Learn how Incorta gives finance and operations teams that use Oracle BI tools a path to faster insights with less IT dependency, plus the ability to answer granular questions, such as line-item audit traces or detailed inventory movements, that would otherwise require major semantic layer work in OBIEE or OAC.

What Are Oracle BI Tools?

Oracle BI tools are a suite of software products designed to help organizations collect, analyze, and act on business data. The core of Oracle's BI portfolio has historically been OBIEE (Oracle Business Intelligence Enterprise Edition), an on-premises platform for enterprise reporting, dashboards, and analytics.

Oracle has since expanded the suite, and its current primary offerings include:

Oracle Analytics Cloud (OAC): Oracle's cloud-native analytics platform and the strategic successor to on-premises OBIEE. OAC provides self-service analytics, AI-driven insights, and visualization capabilities in a SaaS model.

Oracle Analytics Server (OAS): The on-premises version of Oracle Analytics for organizations not ready to move to the cloud.

Oracle BI Publisher: A structured reporting and document generation tool for pixel-perfect formatted outputs.

Oracle BI Applications (OBIA): Prebuilt analytics content packages with domain-specific dashboards and KPIs for Finance, HR, Supply Chain, and other functions.

Benefits of Oracle BI Tools for Enterprise Analytics

Deep Oracle ecosystem integration: Oracle BI tools are purpose-built to connect with Oracle ERP, Oracle Fusion Applications, Oracle E-Business Suite, JD Edwards, PeopleSoft, and Oracle Database. For organizations heavily invested in the Oracle stack, this integration reduces complexity and accelerates time to first report.

Governed, consistent metrics: The semantic layer (RPD in OBIEE, data models in OAC) enforces consistent business definitions across all users. This is critical for large enterprises where Finance, Operations, and Sales need to work from the same version of core metrics.

Enterprise-scale architecture: Oracle BI tools are designed for high-volume, multi-user environments. They support row-level security, role-based access controls, and complex, multi-source data environments.

Prebuilt content via OBIA: For Oracle ERP customers, Oracle BI Applications provide a head start — pre-configured data pipelines, subject areas, and dashboards aligned to Oracle's source data models.

Formatted reporting: Oracle BI Publisher handles structured document generation that most visualization tools cannot. Financial close packages, regulatory reports, and audit-ready outputs require this level of layout control.

Limitations to Consider

Despite their strengths, Oracle BI tools come with well-known trade-offs. The semantic layer requires significant upfront investment and ongoing maintenance. Adding new data sources or metrics typically requires IT or BI administrator involvement. Performance on highly granular queries can degrade without proper aggregation design. And for organizations not standardized on Oracle infrastructure, the tools provide limited value compared to more platform-agnostic alternatives.

How Incorta Extends and Modernizes Oracle BI

Incorta is designed as a complement and upgrade path for organizations running Oracle BI tools. While Oracle BI delivers strong governance and Oracle-native integration, Incorta removes the performance and agility constraints that come with traditional BI architectures.

Incorta's Direct Data Mapping technology connects directly to Oracle ERP, Oracle Fusion, and Oracle databases — the same sources Oracle BI tools rely on — and makes that data available for analysis without ETL pipelines or pre-aggregated data models. The result is query performance against full granularity data that Oracle BI's aggregation-dependent architecture cannot match.

For finance and operations teams that use Oracle BI tools today, Incorta offers a path to faster insights, less IT dependency, and the ability to answer granular questions — like line-item audit traces or detailed inventory movements — that would require major semantic layer work in OBIEE or OAC.


Summary: Learn how to get started with OBIEE reporting, and how Incorta solves common challenges with OBIEE reporting. Where OBIEE requires pre-built semantic layers and aggregation tables to deliver acceptable performance, Incorta's Direct Data Mapping™ technology queries source data directly — at full granularity — without ETL or pre-aggregation.

What Is OBIEE Reporting?

OBIEE (Oracle Business Intelligence Enterprise Edition) reporting refers to the full range of analytical outputs the platform supports — from interactive dashboards and ad hoc queries to scheduled pixel-perfect reports and threshold-based alerts.

OBIEE reporting is designed for enterprise environments with large, complex data sources. It abstracts database complexity through a semantic layer, giving business users access to data using business terminology rather than raw SQL or table names.

Types of Reports in OBIEE

OBIEE supports several distinct report types, each serving a different use case.

Interactive analyses: Built through Oracle BI Answers, these are user-created analyses that combine data columns, filters, and visualizations. Users choose from tables, bar charts, pie charts, pivot tables, and more. Analyses can be saved and shared, or embedded in dashboards.

Dashboards: Collections of analyses arranged on pages. Dashboards support dynamic filtering through prompts and can be personalized by user or role. They are the most common way business users interact with OBIEE data daily.

BI Publisher reports: Structured, formatted reports with precise layouts. Used for financial statements, invoices, regulatory outputs, and other documents that require consistent formatting. BI Publisher reports can be scheduled and delivered via email, printed, or exported to PDF and Excel.

Alerts via BI Delivers: Condition-based notifications that trigger when data meets defined thresholds. For example, an alert when revenue falls below a target or when a supply chain metric exceeds an acceptable range.

How OBIEE Reporting Works

The foundation of all OBIEE reporting is the Oracle BI Repository (RPD) — the platform's semantic layer. The RPD maps physical database tables to logical business objects, defines metrics and hierarchies, and controls what users can see and query.

When a user builds a report in OBIEE, they are selecting from the business-friendly objects exposed by the RPD. OBIEE translates those selections into SQL queries against the underlying database, applies any necessary aggregations, and returns results to the user interface.
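The translation step can be illustrated with a toy semantic model in Python. This is a conceptual sketch only: the dictionary layout, the `build_sql` function, and the table and column names are hypothetical and bear no relation to the actual RPD file format or to OBIEE's query generator.

```python
# Hypothetical semantic model: business-friendly names mapped to physical SQL.
SEMANTIC_MODEL = {
    "table": "fact_sales",
    "dimensions": {"Region": "region_cd", "Fiscal Month": "fiscal_period"},
    "measures": {"Revenue": "SUM(net_amt)"},
}


def build_sql(model, dimensions, measures):
    """Translate a user's selection of business objects into a SQL query."""
    missing = [d for d in dimensions if d not in model["dimensions"]]
    missing += [m for m in measures if m not in model["measures"]]
    if missing:
        # Anything not modeled cannot be queried until the model is extended.
        raise KeyError(f"Not defined in semantic model: {missing}")

    select_parts = [f'{model["dimensions"][d]} AS "{d}"' for d in dimensions]
    select_parts += [f'{model["measures"][m]} AS "{m}"' for m in measures]
    sql = f'SELECT {", ".join(select_parts)} FROM {model["table"]}'
    if dimensions:
        group_cols = ", ".join(model["dimensions"][d] for d in dimensions)
        sql += f" GROUP BY {group_cols}"
    return sql


sql = build_sql(SEMANTIC_MODEL, ["Region"], ["Revenue"])
print(sql)
```

Because every query for "Revenue" resolves to the same expression, all users share one definition. The same design also explains the dependency on the BI team: asking for a measure that has not been modeled (for example, a hypothetical "Margin") raises an error instead of producing a query.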

This architecture ensures consistency: every user reporting on 'Revenue' is working from the same definition, regardless of which dashboard or analysis they are using.

Getting Started with OBIEE Reporting

Access the Oracle BI web interface: OBIEE is browser-based. Your organization's BI team will provide the URL and your login credentials.

Understand the catalog: The BI Catalog stores all saved analyses, dashboards, and reports. Familiarize yourself with the folder structure to find existing reports and understand what is already built.

Use Oracle BI Answers for ad hoc analysis: Navigate to New > Analysis. Select a subject area (a business domain defined in the RPD), then drag columns into your analysis. Apply filters, choose a visualization, and save your work.

Build or access dashboards: Dashboards are assembled from saved analyses. If you have edit permissions, you can create new dashboard pages and add analyses, text, and images.

Work with BI Publisher for formatted reports: BI Publisher has a separate interface. Report templates are built using RTF, PDF, or Excel templates, then connected to data models. Most formatted reports in OBIEE are maintained by BI administrators and IT.

Understand the RPD dependency: If you cannot find the data you need for an analysis, it likely means the subject area or metric has not been defined in the RPD. Requesting new data requires involving your BI team to update the semantic layer.

Common Challenges with OBIEE Reporting

OBIEE reporting is powerful but comes with real limitations. The RPD creates a bottleneck: every new analysis or data point that falls outside the existing semantic model requires IT involvement. Performance on large, granular datasets can degrade without proper aggregation tables. And the platform's age means it lacks the modern UX and self-service flexibility that newer BI tools offer.

How Incorta Addresses OBIEE Reporting Limitations

Incorta was built to solve exactly the problems OBIEE reporting creates. Where OBIEE requires pre-built semantic layers and aggregation tables to deliver acceptable performance, Incorta's Direct Data Mapping™ technology queries source data directly — at full granularity — without ETL or pre-aggregation.

For finance and operations teams that rely on OBIEE for reporting, Incorta delivers the same governed, consistent experience with dramatically faster performance and no semantic layer bottleneck. Users can build analyses against live transactional data — drilling from a monthly P&L down to individual journal entries — without waiting for a BI team to update the RPD.

Incorta's native connectors to Oracle ERP, Oracle Fusion, and Oracle databases mean migration from OBIEE reporting to Incorta is straightforward. You keep the data quality and governance your team depends on. You eliminate the performance and agility constraints that slow analysis down.

