Most enterprise AI initiatives don't fail because of the AI. They fail because of the data.

Companies have invested heavily in machine learning, business intelligence, and generative AI - but the operational data that would actually make those investments useful is still trapped inside complex ERP systems, fragmented pipelines, and legacy architectures that were never designed with agents in mind.

That's the problem Incorta and Google Cloud solve together. And as a Google Cloud partner, Incorta is a foundational part of how enterprises get from raw operational data to production-ready AI agents - fast.

Every analytics initiative starts with the same assumption: the data will be there when you need it. In practice, it rarely is.

Traditional ETL processes strip away the transaction-level detail that AI and analytics actually need. ERP databases like SAP and Oracle can contain 10,000+ tables. Reverse-engineering them into usable pipelines takes months—sometimes years. And once you've built those pipelines, they're fragile. A vendor patch or schema change can break the whole thing, and the institutional knowledge required to fix it often lives with just one or two engineers.

The result: data latency measured in hours or days, partial data sets, and AI models that can't act on your most valuable operational information.

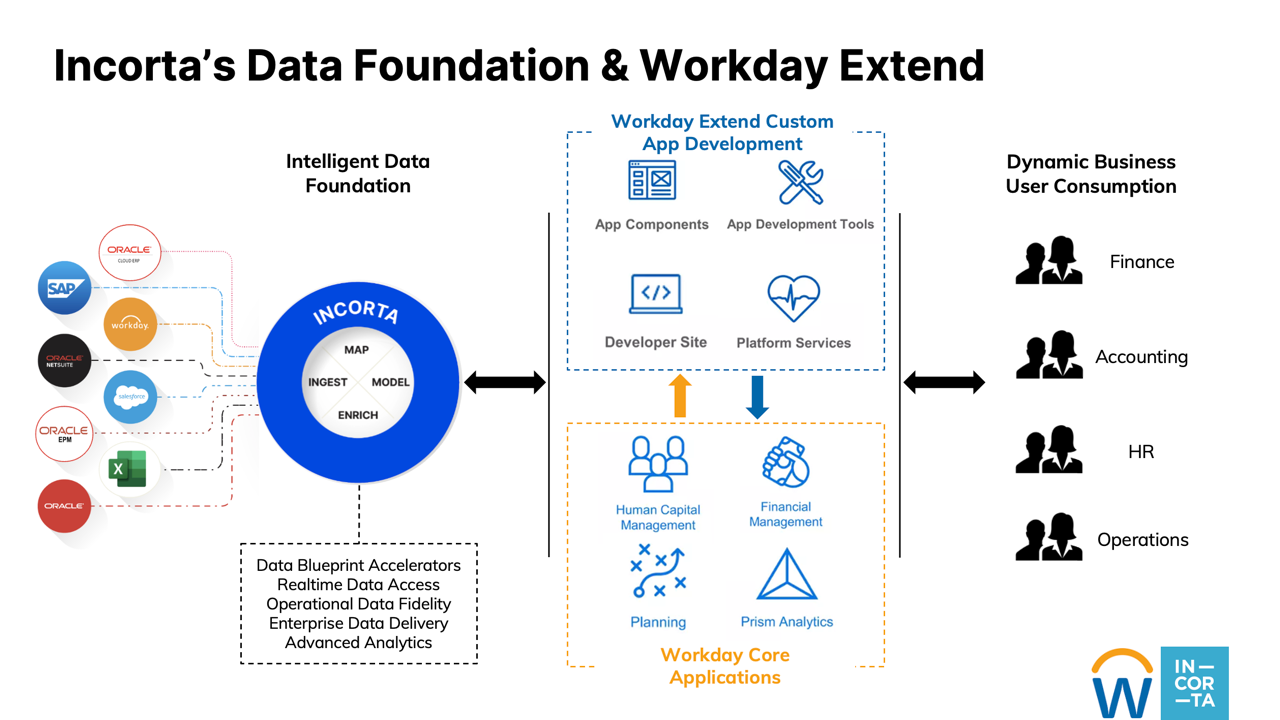

Incorta solves this with Direct Data Mapping™ (DDM)—patented technology that connects directly to source systems like Oracle Fusion, SAP, Workday, and Salesforce, ingests data in its native form (3NF), and delivers 100% of it to Google BigQuery without traditional ETL. No deconstructing. No manual transformations. No data loss.

With over 240 pre-built connectors and automated schema detection, Incorta eliminates the engineering bottleneck entirely. What traditionally takes 18–24 months can happen in weeks. Hormel Foods, for example, replaced an entire Oracle data warehouse with an Incorta-to-BigQuery pipeline in just 10 weeks.

Delivering data faster is only part of the story. The bigger shift happening right now is agentic AI - and it requires something fundamentally different from what most data architectures were built to provide.

A dashboard needs aggregated, high-level trends. An AI agent needs something else entirely:

Granular data. Agents need transaction-level detail to analyze exceptions, detect anomalies, and take precise action. Pre-aggregated data makes their reasoning shallow and incomplete.

Real-time access. Whether an agent is reconciling financial entries, processing invoices, or optimizing supply chain flows, it needs to act on current data—not a batch from last night.

Business context. Raw numbers aren't enough. Agents need to understand why an invoice belongs to a vendor, how an expense rolls up into a cost center, how a shipment ties to an order. Traditional architectures flatten or abstract this context away, leaving agents blind to business logic.
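
To make the granularity point concrete, here is a minimal Python sketch. The `Payment` records, vendor names, and amounts are invented for illustration and are not drawn from any Incorta or Google Cloud API. It shows how a per-vendor total, the kind of figure a dashboard would display, can look unremarkable while the transaction-level rows expose a duplicate payment an agent would need to see.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Payment:
    invoice_id: str
    vendor: str
    amount: float


# Hypothetical transaction-level rows; invoice INV-102 was paid twice.
payments = [
    Payment("INV-101", "Acme", 1200.0),
    Payment("INV-102", "Acme", 850.0),
    Payment("INV-102", "Acme", 850.0),
    Payment("INV-103", "Globex", 430.0),
]


def vendor_totals(rows):
    """Pre-aggregated view: one total per vendor, as a dashboard might show."""
    totals = {}
    for row in rows:
        totals[row.vendor] = totals.get(row.vendor, 0.0) + row.amount
    return totals


def duplicate_invoices(rows):
    """Transaction-level check: invoice IDs that appear more than once."""
    counts = Counter(row.invoice_id for row in rows)
    return sorted(inv for inv, n in counts.items() if n > 1)


print(vendor_totals(payments))       # the totals alone do not reveal the problem
print(duplicate_invoices(payments))  # the row-level data does
```

Once the rows are summed, the duplicate is indistinguishable from legitimate spend, which is why an agent working only from aggregates cannot flag it.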

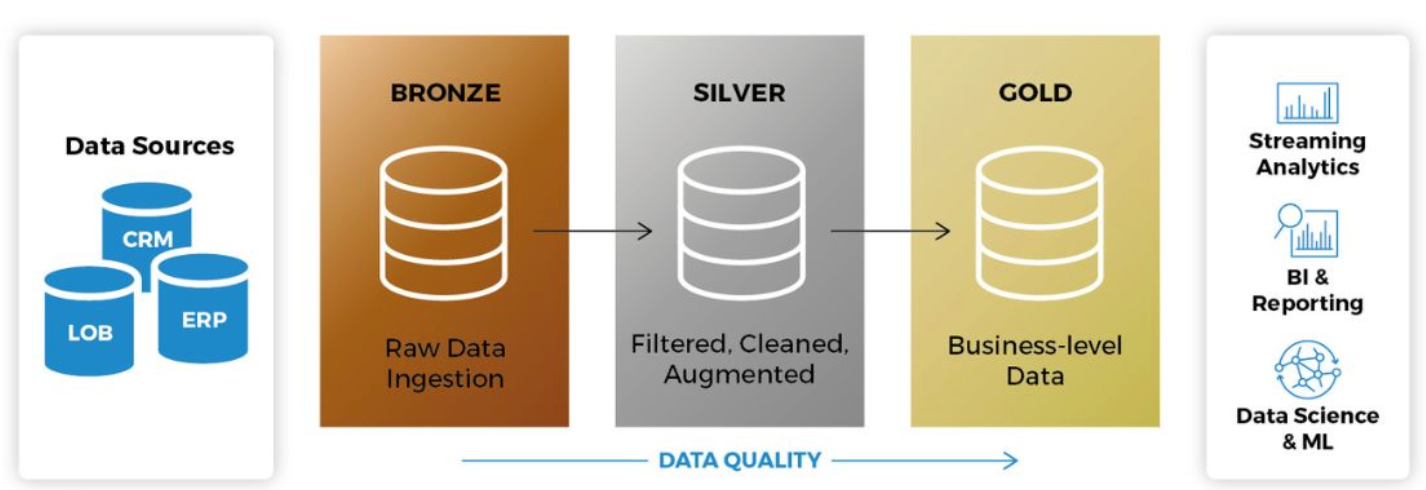

Incorta addresses all three with a semantic layer built directly into its platform. When data is ingested via DDM, Incorta automatically captures source relationships and keeps them intact - translating complex ERP data models into logical, business-centric datasets. Business schemas organize these relationships, standardize calculations, and enforce governance, so every downstream consumer—whether a dashboard or an AI agent—is working from the same trusted definitions.

Incorta calls this enterprise truth: the verified, context-rich operational knowledge that accurately represents how your business actually works. It includes live data from ERP, CRM, and HR systems; historical records; business logic; and trusted third-party information, all delivered to BigQuery as a continuously updated foundation for intelligent automation.

Once enterprise truth is in BigQuery, Google Gemini Enterprise can do something genuinely powerful with it.

Gemini Enterprise is Google's secure, enterprise-grade environment for building, deploying, and managing customizable AI agents at scale. Using the Agent Development Kit (ADK), developers can define agents that connect to BigQuery, reason over live enterprise data, and take autonomous action.

Here's what that looks like in practice.

A financial analyst at a large enterprise might spend hours manually reviewing invoices for discrepancies against purchase orders and contracts. With an Incorta-powered Gemini agent, the workflow changes entirely:

The same agent can generate reports, create visualizations, and answer business questions, all from a single interface and grounded in real operational data.

This is what separates production-grade agentic AI from a compelling demo. The intelligence is in the model. The reliability is in the data foundation.

The joint Incorta and Google Cloud solution brings together:

The result is a data pipeline that goes from months to weeks, AI agents grounded in operational reality rather than generic model training, and an analytics architecture that scales across departments without duplicating effort or fragmenting governance.

If your AI initiatives are stalling at the pilot stage, the bottleneck is almost certainly the data - not the model. Incorta and Google Cloud give you a clear, faster path to fixing that.


Summary: Most enterprises building agentic AI on Gemini Enterprise hit the same wall: the model is ready, but the ERP data behind it isn't. Agents pull from stale exports and siloed systems, fill gaps with assumptions, and deliver outputs teams don't trust. The fix is three things most ERP pipelines were never built to deliver: an automated semantic layer, full business context, and live data. Incorta delivers all three to BigQuery automatically, giving Gemini Enterprise the complete, accurate foundation it needs to power production-ready agentic AI on Oracle, SAP, and Workday data.

You've seen what Gemini Enterprise can do. The reasoning is there. The agentic capabilities are there. But when you connect it to your actual ERP data, the outputs don't hold up - and your team doesn't trust them enough to act.

You're not alone. 91% of AI models experience quality degradation over time because of stale, incomplete, or fragmented data. More than 80% of AI projects fail, and the root cause is almost never the model. It's the data foundation behind it.

Right now, most enterprises feed Gemini summaries, old exports, and siloed data that was built for dashboards, not for AI to reason on. When an agent hits a gap, it fills it with an assumption. The output looks confident. The logic underneath it isn't.

That's why only 2% of organizations have implemented AI agents at scale, even though more than 65% are piloting or exploring. The gap between a Gemini pilot that works in a demo and a production deployment your team relies on comes down to one thing: whether your agents have enough context to reason on your data the way your business actually needs them to.

The enterprises that are getting agentic AI into production on Gemini Enterprise have all solved the same foundational problem. They've given their agents three things most ERP pipelines were never built to deliver:

A semantic layer. Your ERP stores data in tables and transaction codes. Gemini needs to understand what that data means in business terms — what a PO status implies, how a GL account maps to a cost center, which inventory fields signal risk. Without a semantic layer, your agents are working with numbers they can't interpret.

Full business context. A single data point means nothing without the relationships around it. A Gemini agent analyzing inventory needs to see the connections across POs, demand forecasts, supplier lead times, and warehouse capacity. Most pipelines deliver flat, summarized data that strips out this context before it ever reaches BigQuery.

Live data. Agents making decisions on data that's hours or days old aren't making real decisions. In supply chain, that's the difference between catching a stock-out early and writing down millions in SLOB inventory. In finance, it's the difference between a real-time variance explanation and a manual reconciliation that takes your team days.

The challenge is that most enterprises spend 80% of their time building data connectors instead of training agents to handle intelligent workflows. Legacy ERPs weren't designed for real-time AI access. They export CSVs, use outdated APIs, and silo critical business data across dozens of modules.

So teams face an impossible choice: limit their Gemini agents to the data that's easy to access and accept incomplete outputs, or spend months building custom pipelines for every ERP system. Most choose the second option and get stuck — McKinsey reports that only 40% of companies see any enterprise-level impact from their AI initiatives.

This is exactly why Incorta and Google Cloud built a better path.

Incorta delivers all three: an automated semantic layer, full business context, and live ERP data - directly to BigQuery. No custom pipelines. No months of implementation. Your Oracle, SAP, and Workday data arrives in BigQuery complete, accurate, and structured for Gemini Enterprise to reason on.

Your agents get the full picture. Your team gets answers they trust enough to act on. And you go from pilot to production without the bottleneck that stalls everyone else.

That's what 100% context and 100% accuracy actually look like when you're building on Gemini Enterprise.

We'll be at Google Cloud Next, April 22–24 in Las Vegas, Booth #2811. Come see how Incorta automatically generates the business context Gemini Enterprise needs to power production-ready agentic AI on your ERP data.

Summary: Incorta solves one of the most common blockers to enterprise AI: getting clean, complete, AI-ready data out of complex ERP systems like Oracle, SAP, Workday, and NetSuite. Where custom-built pipelines typically take 18 to 24 months to deliver reliable data and absorb 75 to 80% of ongoing maintenance costs, Incorta compresses time-to-value from years to weeks using prebuilt ERP blueprints and automated data modeling. Incorta preserves full transaction-level granularity and native relational context across ERP objects, and it supports near real-time ingestion, giving AI agents the data quality they need to reason, automate, and act. For data and AI leaders evaluating build vs. buy for ERP-driven AI strategies, Incorta eliminates the engineering burden of building and maintaining custom ERP pipelines and converts unpredictable infrastructure spend into a predictable subscription model.

Your AI strategy has a data problem, and your ERP is at the center of it

AI initiatives stall for plenty of reasons. Budgets get approved, use cases get mapped, stakeholder buy-in gets secured - then the data isn't ready. That's the conversation happening in data and IT leadership teams across every industry right now, and it almost always traces back to ERP systems.

Why ERP data is so hard to work with

ERP platforms hold the most valuable operational data in the enterprise: purchase orders, invoices, vendor records, general ledger entries, inventory movements. For AI to reason and automate, it needs this data granular, current, and contextually intact.

ERP systems were built to run operations, not to feed AI. Their schemas are sprawling (often tens of thousands of tables), relationships are complex and frequently undocumented, and many platforms include structural quirks like tables without primary keys that complicate replication and modeling. Getting clean, AI-ready data out of an ERP system is a multi-year undertaking for most organizations, and that timeline is incompatible with most AI roadmaps.

Why custom ERP pipeline builds fail

Time to value is longer than expected. Internal ERP data builds typically require 18 to 24 months before the business has reliable, AI-ready data. Every quarter spent building infrastructure is a quarter without AI value.

Total cost of ownership escalates. Custom builds absorb 75 to 80% of ongoing maintenance burden, including patches, updates, and accumulated technical debt. As AI scales, demands on the data layer grow: more volume, faster refresh cycles, more governance requirements. What starts as a bounded engineering project becomes an OPEX-heavy liability.

ERP pipelines require domain expertise, not just engineering. Understanding which tables matter, how business rules are embedded, and how cross-module dependencies behave takes years to develop. Pipelines built without that knowledge look correct and break in production.

Technical debt accumulates into AI risk. McKinsey estimates a 10 to 20% tech debt tax on engineering projects, diverting innovation budgets to remediation. Gartner puts the average annual cost of poor data quality at $12.9 million. Unstable pipelines produce unreliable AI outputs, and unreliable outputs kill adoption.

What AI actually needs from your ERP data

AI has three non-negotiable data requirements.

Granularity. AI models need full transaction-level detail. Pre-aggregated data reduces the signal AI needs to find patterns, anomalies, and causal relationships.

Timeliness. Agentic AI requires near real-time data. Batch pipelines with multi-hour latency make alert-triggering, approval automation, and exception flagging impossible.

Context. ERP data only makes sense relationally. An invoice needs its purchase order, receipt record, vendor history, and GL posting to be meaningful. AI needs that relational structure (PO to Receipt to Invoice to Vendor to GL) preserved, not flattened.

Custom builds routinely compromise on at least one of these. Data gets summarized to reduce complexity. Refresh cycles get extended to manage engineering load. Relationships get lost in transformation.
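
The PO to Receipt to Invoice chain mentioned above can be sketched as a simple three-way match. This is a minimal illustration, assuming invented record types (`PurchaseOrder`, `Receipt`, `Invoice`) and a hypothetical `three_way_match` helper; it is not how Incorta or any specific ERP implements matching. It only shows why the check is impossible once the relationships between records have been flattened away.

```python
from dataclasses import dataclass


@dataclass
class PurchaseOrder:
    po_id: str
    vendor: str
    quantity: int
    unit_price: float


@dataclass
class Receipt:
    po_id: str
    quantity_received: int


@dataclass
class Invoice:
    invoice_id: str
    po_id: str
    quantity: int
    amount: float


def three_way_match(invoice, orders, receipts, tolerance=0.01):
    """Return a list of discrepancies between an invoice, its PO, and receipts."""
    po = orders.get(invoice.po_id)
    if po is None:
        return ["no purchase order found"]
    issues = []
    # Sum every receipt that points back at the same purchase order.
    received = sum(r.quantity_received for r in receipts if r.po_id == po.po_id)
    if invoice.quantity > received:
        issues.append(f"billed {invoice.quantity} units but received {received}")
    expected = invoice.quantity * po.unit_price
    if abs(invoice.amount - expected) > tolerance:
        issues.append(f"amount {invoice.amount:.2f} differs from expected {expected:.2f}")
    return issues


# Hypothetical sample data: 100 units ordered and billed, only 90 received.
orders = {"PO-1": PurchaseOrder("PO-1", "Acme", 100, 2.50)}
receipts = [Receipt("PO-1", 60), Receipt("PO-1", 30)]
invoice = Invoice("INV-9", "PO-1", 100, 250.00)

print(three_way_match(invoice, orders, receipts))
```

Every comparison in the function depends on a key that links one record to another. A pipeline that delivers only invoice totals per vendor leaves nothing to join on.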

What a purpose-built platform can change

Purpose-built ERP data platforms address all three requirements by design. They ship with prebuilt blueprints for major ERP systems including Oracle, SAP, Workday, and NetSuite, encoding domain expertise that would otherwise take years to develop internally. They preserve native relationships and business logic without manual reconstruction and support near real-time ingestion.

On the cost side, buying converts unpredictable engineering spend into a predictable subscription. ERP schema changes, performance tuning, security patches, and infrastructure evolution become the vendor's responsibility.

The data on this is clear. IDC reports that 80% of data professional time is still spent on discovery and preparation rather than analytics. IDC Qlik research finds 81% of companies struggle with AI data quality in ways that directly undermine ROI. Gartner predicts over 40% of agentic AI projects will be canceled by 2027 because operational and data foundations were not ready.

Data leaders: build vs. buy?

Build vs. buy is no longer a tooling conversation. It is a strategic decision with real timing consequences. AI value does not accrue while you are still building infrastructure. Organizations moving fastest on AI are not the ones with the most sophisticated in-house data engineering. They are the ones operating on a data foundation built for this moment.

Download our whitepaper, The AI Data Foundation Dilemma: Build vs. Buy for ERP-Driven AI Strategies, for the complete five-dimension analysis.

Summary: Learn how Incorta gives finance and operations teams that use Oracle BI tools a path to faster insights, with less IT dependency and the ability to answer granular questions (like line-item audit traces or detailed inventory movements) that would require major semantic layer work in OBIEE or OAC.

What Are Oracle BI Tools?

Oracle BI tools are a suite of software products designed to help organizations collect, analyze, and act on business data. The core of Oracle's BI portfolio has historically been OBIEE (Oracle Business Intelligence Enterprise Edition), an on-premises platform for enterprise reporting, dashboards, and analytics.

Oracle has since expanded the suite, and its current primary offerings include:

Oracle Analytics Cloud (OAC): Oracle's cloud-native analytics platform and the strategic successor to on-premises OBIEE. OAC provides self-service analytics, AI-driven insights, and visualization capabilities in a SaaS model.

Oracle Analytics Server (OAS): The on-premises version of Oracle Analytics for organizations not ready to move to the cloud.

Oracle BI Publisher: A structured reporting and document generation tool for pixel-perfect formatted outputs.

Oracle BI Applications (OBIA): Prebuilt analytics content packages with domain-specific dashboards and KPIs for Finance, HR, Supply Chain, and other functions.

Benefits of Oracle BI Tools for Enterprise Analytics

Deep Oracle ecosystem integration: Oracle BI tools are purpose-built to connect with Oracle ERP, Oracle Fusion Applications, Oracle E-Business Suite, JD Edwards, PeopleSoft, and Oracle Database. For organizations heavily invested in the Oracle stack, this integration reduces complexity and accelerates time to first report.

Governed, consistent metrics: The semantic layer (RPD in OBIEE, data models in OAC) enforces consistent business definitions across all users. This is critical for large enterprises where Finance, Operations, and Sales need to work from the same version of core metrics.

Enterprise-scale architecture: Oracle BI tools are designed for high-volume, multi-user environments. They support row-level security, role-based access controls, and complex, multi-source data environments.

Prebuilt content via OBIA: For Oracle ERP customers, Oracle BI Applications provide a head start — pre-configured data pipelines, subject areas, and dashboards aligned to Oracle's source data models.

Formatted reporting: Oracle BI Publisher handles structured document generation that most visualization tools cannot. Financial close packages, regulatory reports, and audit-ready outputs require this level of layout control.

Limitations to Consider

Despite their strengths, Oracle BI tools come with well-known trade-offs. The semantic layer requires significant upfront investment and ongoing maintenance. Adding new data sources or metrics typically requires IT or BI administrator involvement. Performance on highly granular queries can degrade without proper aggregation design. And for organizations not standardized on Oracle infrastructure, the tools provide limited value compared to more platform-agnostic alternatives.

How Incorta Extends and Modernizes Oracle BI

Incorta is designed as a complement and upgrade path for organizations running Oracle BI tools. While Oracle BI delivers strong governance and Oracle-native integration, Incorta removes the performance and agility constraints that come with traditional BI architectures.

Incorta's Direct Data Mapping technology connects directly to Oracle ERP, Oracle Fusion, and Oracle databases (the same sources Oracle BI tools rely on) and makes that data available for analysis without ETL pipelines or pre-aggregated data models. The result is query performance against full-granularity data that Oracle BI's aggregation-dependent architecture cannot match.

For finance and operations teams that use Oracle BI tools today, Incorta offers a path to faster insights, less IT dependency, and the ability to answer granular questions — like line-item audit traces or detailed inventory movements — that would require major semantic layer work in OBIEE or OAC.


Summary: Learn how to get started with OBIEE reporting, and how Incorta solves common challenges with OBIEE reporting. Where OBIEE requires pre-built semantic layers and aggregation tables to deliver acceptable performance, Incorta's Direct Data Mapping™ technology queries source data directly — at full granularity — without ETL or pre-aggregation.

What Is OBIEE Reporting?

OBIEE (Oracle Business Intelligence Enterprise Edition) reporting refers to the full range of analytical outputs the platform supports — from interactive dashboards and ad hoc queries to scheduled pixel-perfect reports and threshold-based alerts.

OBIEE reporting is designed for enterprise environments with large, complex data sources. It abstracts database complexity through a semantic layer, giving business users access to data using business terminology rather than raw SQL or table names.

Types of Reports in OBIEE

OBIEE supports several distinct report types, each serving a different use case.

Interactive analyses: Built through Oracle BI Answers, these are user-created analyses that combine data columns, filters, and visualizations. Users choose from tables, bar charts, pie charts, pivot tables, and more. Analyses can be saved and shared, or embedded in dashboards.

Dashboards: Collections of analyses arranged on pages. Dashboards support dynamic filtering through prompts and can be personalized by user or role. They are the most common way business users interact with OBIEE data daily.

BI Publisher reports: Structured, formatted reports with precise layouts. Used for financial statements, invoices, regulatory outputs, and other documents that require consistent formatting. BI Publisher reports can be scheduled and delivered via email, printed, or exported to PDF and Excel.

Alerts via BI Delivers: Condition-based notifications that trigger when data meets defined thresholds. For example, an alert when revenue falls below a target or when a supply chain metric exceeds an acceptable range.

How OBIEE Reporting Works

The foundation of all OBIEE reporting is the Oracle BI Repository (RPD) — the platform's semantic layer. The RPD maps physical database tables to logical business objects, defines metrics and hierarchies, and controls what users can see and query.

When a user builds a report in OBIEE, they are selecting from the business-friendly objects exposed by the RPD. OBIEE translates those selections into SQL queries against the underlying database, applies any necessary aggregations, and returns results to the user interface.

This architecture ensures consistency: every user reporting on 'Revenue' is working from the same definition, regardless of which dashboard or analysis they are using.
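
As a rough illustration of that translation step, the sketch below maps business terms to physical columns and generates SQL from a user's selections. It is a toy model under stated assumptions: the `SEMANTIC_MODEL` dictionary, the `gl_journal_lines` table, its column names, and the `build_query` helper are all hypothetical, and the real RPD format and OBIEE's query generation are considerably more involved.

```python
# Hypothetical semantic model: business names mapped to physical SQL expressions.
SEMANTIC_MODEL = {
    "table": "gl_journal_lines",
    "dimensions": {
        "Cost Center": "cost_center_code",
        "Fiscal Period": "period_name",
    },
    "measures": {
        "Revenue": "SUM(CASE WHEN account_type = 'REV' THEN amount ELSE 0 END)",
    },
}


def build_query(dimensions, measures, model=SEMANTIC_MODEL):
    """Translate business-term selections into a single aggregate SQL statement."""
    # Unknown terms raise KeyError: data outside the model cannot be queried.
    dim_cols = [model["dimensions"][d] for d in dimensions]
    select_parts = [f'{col} AS "{name}"' for name, col in zip(dimensions, dim_cols)]
    select_parts += [f'{model["measures"][m]} AS "{m}"' for m in measures]
    sql = f'SELECT {", ".join(select_parts)} FROM {model["table"]}'
    if dim_cols:
        sql += f' GROUP BY {", ".join(dim_cols)}'
    return sql


print(build_query(["Cost Center"], ["Revenue"]))
```

Because "Revenue" is defined once in the model, every query that selects it gets the same expression. The same design also explains the dependency on the BI team: a term missing from the model simply cannot be selected until someone adds it.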

Getting Started with OBIEE Reporting

Access the Oracle BI web interface: OBIEE is browser-based. Your organization's BI team will provide the URL and your login credentials.

Understand the catalog: The BI Catalog stores all saved analyses, dashboards, and reports. Familiarize yourself with the folder structure to find existing reports and understand what is already built.

Use Oracle BI Answers for ad hoc analysis: Navigate to New > Analysis. Select a subject area (a business domain defined in the RPD), then drag columns into your analysis. Apply filters, choose a visualization, and save your work.

Build or access dashboards: Dashboards are assembled from saved analyses. If you have edit permissions, you can create new dashboard pages and add analyses, text, and images.

Work with BI Publisher for formatted reports: BI Publisher has a separate interface. Report templates are built using RTF, PDF, or Excel templates, then connected to data models. Most formatted reports in OBIEE are maintained by BI administrators and IT.

Understand the RPD dependency: If you cannot find the data you need for an analysis, it likely means the subject area or metric has not been defined in the RPD. Requesting new data requires involving your BI team to update the semantic layer.

Common Challenges with OBIEE Reporting

OBIEE reporting is powerful but comes with real limitations. The RPD creates a bottleneck: every new analysis or data point that falls outside the existing semantic model requires IT involvement. Performance on large, granular datasets can degrade without proper aggregation tables. And the platform's age means it lacks the modern UX and self-service flexibility that newer BI tools offer.

How Incorta Addresses OBIEE Reporting Limitations

Incorta was built to solve exactly the problems OBIEE reporting creates. Where OBIEE requires pre-built semantic layers and aggregation tables to deliver acceptable performance, Incorta's Direct Data Mapping™ technology queries source data directly — at full granularity — without ETL or pre-aggregation.

For finance and operations teams that rely on OBIEE for reporting, Incorta delivers the same governed, consistent experience with dramatically faster performance and no semantic layer bottleneck. Users can build analyses against live transactional data — drilling from a monthly P&L down to individual journal entries — without waiting for a BI team to update the RPD.

Incorta's native connectors to Oracle ERP, Oracle Fusion, and Oracle databases mean migration from OBIEE reporting to Incorta is straightforward. You keep the data quality and governance your team depends on. You eliminate the performance and agility constraints that slow analysis down.


Summary: What is OBIEE and how does it differ from other BI tools? Learn how Incorta's Direct Data Mapping™ technology allows analysts to query granular source data directly, without building ETL pipelines or pre-aggregating data models.

OBIEE's Place in the BI Landscape

OBIEE (Oracle Business Intelligence Enterprise Edition) is a purpose-built enterprise BI platform developed by Oracle. Unlike lighter-weight tools such as Tableau, Power BI, or Looker, OBIEE was designed from the ground up for large organizations running complex, multi-source Oracle data environments.

Understanding where OBIEE fits — and where it falls short — requires looking at a few key architectural differences.

How OBIEE Differs from Modern BI Tools

Semantic layer vs. direct query: OBIEE centers on a metadata repository (the RPD file) that maps business terms to underlying database objects. This semantic layer gives business users a consistent vocabulary, but it requires significant administrative effort to build and maintain. Modern tools like Tableau and Power BI allow more direct data connections, though they trade off consistency at scale.

On-premises architecture: OBIEE is primarily an on-premises platform. While Oracle offers Oracle Analytics Cloud (OAC) as its cloud successor, many OBIEE deployments remain tied to on-prem infrastructure. Modern BI tools are largely cloud-native or SaaS, making them faster to deploy and easier to scale.

Deep Oracle integration: OBIEE is tightly integrated with Oracle ERP, Oracle Fusion, Oracle Database, and Oracle's suite of applications. For Oracle-heavy environments, this is an advantage. For mixed environments, it can create complexity.

Pre-aggregated data models: OBIEE often relies on pre-built data models and aggregations to deliver acceptable performance. This works well for standardized reporting but limits flexibility for ad hoc, granular analysis.

Self-service limitations: Compared to newer tools, OBIEE's self-service capabilities are more constrained. Building new analyses typically requires involvement from IT or BI administrators to update the semantic layer.

OBIEE vs. Tableau

Tableau prioritizes visual exploration and self-service analytics. It is faster to deploy and more accessible to non-technical users, but it lacks OBIEE's enterprise governance and deep Oracle integration. OBIEE wins on governance; Tableau wins on speed and usability.

OBIEE vs. Power BI

Microsoft Power BI is a cloud-native alternative with strong integration into the Microsoft ecosystem (Azure, Teams, Office 365). For organizations standardized on Microsoft, Power BI offers a more modern experience than OBIEE at a lower cost. However, it does not offer the same native depth with Oracle data sources.

OBIEE vs. Looker

Looker (now part of Google Cloud) takes a code-first approach to data modeling through its LookML framework. It offers strong governance similar to OBIEE's semantic layer but in a more modern, cloud-native package. Looker is better suited for cloud-first organizations; OBIEE is more entrenched in on-premises Oracle environments.

How Incorta Fits Into This Comparison

Incorta takes a different approach than all of these tools. While OBIEE, Tableau, Power BI, and Looker all require some form of data preparation, modeling, or aggregation layer before users can analyze data, Incorta's Direct Data Mapping™ technology allows analysts to query granular source data directly — without building ETL pipelines or pre-aggregating data models.

For organizations running Oracle ERP or Oracle Fusion, Incorta provides native connectors that extract and map transactional data at full granularity. This means finance, supply chain, and operations teams can run complex analyses against live, line-item detail — without the performance trade-offs that come with OBIEE's aggregation-dependent architecture.

The result is faster time to insight, reduced dependency on IT for data preparation, and the flexibility to answer questions OBIEE's data models were never designed for.

Summary: Review Oracle's Business Intelligence Enterprise Edition, who uses it, and how Incorta helps Oracle users work with live, detailed data — without the performance bottlenecks and administrative overhead that come with maintaining OBIEE's semantic layer and aggregation pipelines.

What Is OBIEE?

OBIEE stands for Oracle Business Intelligence Enterprise Edition. It is Oracle's flagship on-premises business intelligence platform, designed to help enterprise organizations collect, analyze, and visualize data from across the business. OBIEE provides interactive dashboards, ad hoc reporting, scheduled reports, mobile analytics, and data visualization tools — all delivered through a web-based interface.

Organizations running Oracle ERP systems, Oracle E-Business Suite, or JD Edwards have historically used OBIEE as their primary BI layer because of its deep integration with Oracle's broader technology stack.

OBIEE Meaning: Breaking Down the Acronym

Oracle: Developed and maintained by Oracle Corporation.

Business Intelligence: Refers to the tools, processes, and technologies used to analyze data and support decision-making.

Enterprise Edition: Indicates the platform is designed for large-scale, enterprise-grade deployments — not a lightweight or departmental solution.

In practice, OBIEE is often used interchangeably with 'Oracle BI,' 'Oracle OBI,' or 'Oracle Business Intelligence' — all referring to the same core platform.

What Does OBIEE Do?

At its core, OBIEE allows business users to query data from enterprise sources and surface insights through dashboards and reports. Key capabilities include:

Interactive dashboards that pull live data from connected sources.

Ad hoc analysis through Oracle BI Answers, which allows users to build their own queries without writing SQL.

Scheduled reporting and delivery via Oracle BI Delivers.

A semantic layer (the Oracle BI Repository or RPD file) that abstracts the underlying database so business users work with familiar business terms, not raw table names.

Who Uses OBIEE?

OBIEE is most common in large enterprises — particularly those running Oracle ERP, Oracle Fusion Applications, or legacy Oracle databases. Finance teams, supply chain teams, and operations leaders have traditionally relied on OBIEE for standardized reporting across complex, multi-system data environments.

OBIEE and Oracle Analytics Cloud (OAC)

Oracle has shifted its investment toward Oracle Analytics Cloud (OAC), the cloud-native successor to on-premises OBIEE. Many organizations are currently evaluating migration paths from OBIEE to OAC or considering third-party modern analytics platforms as part of broader cloud transformation initiatives.

How Incorta Relates to OBIEE

Incorta is a modern data platform built for organizations that have outgrown the limitations of OBIEE and traditional BI tools. Where OBIEE relies on pre-aggregated data models and complex ETL pipelines, Incorta's Direct Data Mapping™ technology eliminates those layers entirely — delivering faster query performance directly against granular source data.

For Oracle customers specifically, Incorta provides native connectors to Oracle ERP, Oracle Fusion, and JD Edwards, making it a natural upgrade path from OBIEE. Finance and operations teams get the familiar ability to work with live, detailed data — without the performance bottlenecks and administrative overhead that come with maintaining OBIEE's semantic layer and aggregation pipelines.

If your organization is evaluating what comes next after OBIEE, Incorta is built to handle exactly that transition.


This post is part of Incorta's Innovate with Intelligence webinar series, a four-part exploration of agentic AI built for enterprise teams. From design patterns to evaluation to governance, each session tackles a different layer of what it takes to move AI from demo to production. Catch the full series here.

When organizations talk about AI security, the conversation usually centers on the model: Is it hallucinating? Could it be manipulated? What if it says something it shouldn't?

These are real concerns. But in Episode 4 of Incorta's 2026 AI webinar series, we made a more provocative argument: the model is not where your security problem starts - the data is.

Build proper data governance first, and AI safety becomes a much more tractable problem. Skip it, and no amount of model-level safeguarding will save you. Watch the full discussion here or keep reading for our step-by-step guide:

Before getting to solutions, it helps to name the problems clearly. Enterprise AI faces four major categories of risk:

Hallucination and Reliability: LLMs generate statistically plausible output, not verified truth. They can fabricate academic citations that look real, apply logic incorrectly to unfamiliar regulatory frameworks, or confidently produce wrong answers with no indication that anything is amiss. The impact: trust erosion and compliance risk.

The Black Box Problem: Traditional deep learning models lack transparent, traceable reasoning. The same question phrased slightly differently can produce a different answer with no clear explanation. This makes enterprise AI hard to audit, hard to debug, and nearly impossible to certify in high-stakes environments.

Contextual and Common Sense Gaps: Models rely on learned text patterns, not embodied understanding. Subtle rephrasing can trigger overcomplicated or incorrect reasoning. Performance outside tightly scoped workflows is fragile.

Prompt Injection and Security: This is the most acute risk for agentic systems. Models treat all text as potential instructions. They don't inherently distinguish between trusted system prompts and malicious user input. A carefully crafted document in a RAG pipeline can instruct the model to reveal sensitive data. A user who knows the pattern can attempt to override system instructions entirely.

Traditional AI models have a limited attack surface. They hallucinate, or they produce toxic output, both addressable with guardrails.

Agentic systems are fundamentally more exposed. Because agents can take actions (calling APIs, querying databases, triggering workflows), the consequences of a security failure aren't just a bad answer. They're a compromised system.

The threat landscape for enterprise AI agents includes prompt injection (malicious instructions embedded in user input or external data), goal hijacking (altering the objective the agent is working toward), tool and API manipulation (exploiting improperly scoped access), data poisoning (corrupting the information the agent retrieves), memory poisoning (tampering with the agent's stored context between sessions), privilege escalation (an agent assigned to the wrong access group gains more than it should), and cascading failure (in multi-agent systems, a compromised agent overwhelms others through excessive requests).

Despite this list, these risks are manageable with the right architecture.

The principle is straightforward. Before you can trust AI output, you need to trust the data it's reasoning over. If an agent has access to everything, it can expose everything. Governance at the data layer is what prevents that.

In Incorta's implementation, this means four interconnected pillars:

One underappreciated governance mechanism is the semantic layer, the intermediate layer between raw technical data and the business user experience.

Rather than exposing database tables directly to an AI agent, the semantic layer presents curated, labeled views. These views can be configured with clear column labels and descriptions in any language, explicit enablement or disablement of AI features per view, and metadata enrichment that helps the model understand business context rather than just schema structure.
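To make the idea concrete, a curated view can be pictured as a small metadata record with an explicit AI switch. This is a minimal sketch, not Incorta's API: the `SemanticView` class, the `ai_enabled` flag, and the view names are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class SemanticView:
    """Hypothetical description of one curated view in a semantic layer."""
    name: str
    ai_enabled: bool  # explicit per-view switch for AI features
    column_labels: dict[str, str] = field(default_factory=dict)


def views_visible_to_agent(views: list[SemanticView]) -> list[str]:
    """Return only the names of views an AI agent is allowed to see."""
    return [v.name for v in views if v.ai_enabled]


# Illustrative views: labels give the model business context, not just schema.
views = [
    SemanticView("sales_summary", True, {"amt": "Invoice amount (USD)"}),
    SemanticView("payroll_detail", False, {"sal": "Base salary"}),
]
print(views_visible_to_agent(views))
```

The point of the flag is that exposure is an explicit decision per view, rather than a side effect of whatever tables happen to exist.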

This means governance isn't just about what data the agent can access. It's about how well the agent understands what it's looking at. Poorly labeled metadata leads to poor AI output. Well-governed metadata leads to answers that business users can trust.

Governance without visibility is incomplete. A secure AI deployment needs a full audit trail: who asked what, when, how the agent responded, and how users rated the experience.

This serves two purposes. First, it enables compliance. You can demonstrate exactly what the agent did and why. Second, it creates a feedback loop for improvement. Thumb-down signals, repeated questions, and session drop-offs all reveal where the agent is failing users, giving teams a prioritized list of what to fix.
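As a rough illustration, each interaction could be captured as one structured log line. The schema below (field names, rating encoding) is an assumption made for this sketch, not a documented format.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def audit_record(user: str, question: str, response: str,
                 rating: Optional[int] = None) -> str:
    """Serialize one agent interaction as a JSON line (hypothetical schema)."""
    return json.dumps({
        "timestamp": datetime.now(timezone.utc).isoformat(),  # when
        "user": user,                                         # who
        "question": question,                                 # asked what
        "response": response,                                 # how the agent responded
        "rating": rating,  # assumed encoding: 1 = thumbs up, -1 = thumbs down
    })
```

Because every record carries the same fields, the same log can feed both a compliance report and a list of low-rated questions to fix.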

The principle of least privilege, where every user and agent gets only the minimum access necessary to perform their task, applies at every layer. Continuous monitoring ensures that what's true today stays true as the system evolves.
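In code, least privilege usually comes down to a deny-by-default check. A minimal sketch, assuming a hypothetical mapping of agent roles to the views each may query:

```python
# Hypothetical mapping of agent roles to the views each role may query.
ALLOWED_VIEWS = {
    "finance_agent": {"gl_balances", "invoices"},
    "supply_agent": {"inventory"},
}


def can_query(agent_role: str, view: str) -> bool:
    """Deny by default: access is granted only if explicitly listed."""
    return view in ALLOWED_VIEWS.get(agent_role, set())
```

An unknown role gets an empty set, so a misconfigured agent ends up with no access instead of too much.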

The session's central argument: treating AI security as primarily a model problem is the wrong frame. Models can be tuned, guardrailed, and monitored, but if the underlying data is ungoverned, those efforts are built on sand.

The organizations that will deploy enterprise AI with confidence are the ones that treat data governance as a prerequisite, not an afterthought. Secure the foundation. Define clear access boundaries. Audit continuously. Then trust the AI to operate within those boundaries. Governance isn't something you build after the agent is ready - it's what makes the agent ready.


There's a moment every AI team knows well. The demo goes perfectly, the agent answers everything correctly, stakeholders are impressed... Then you deploy it.

Users start phrasing questions in unexpected ways. Edge cases appear that nobody thought to test. A prompt tweak that improves one query quietly breaks three others. And suddenly, the team is back to manually checking outputs, hoping nothing slipped through.

In Episode 3 we tackled this problem head-on: how do you move from vibes-based testing to a rigorous, systematic evaluation framework for enterprise AI?

Watch the full episode, or keep reading for our step-by-step approach.

Traditional software is deterministic. You write a test, it passes or fails, and you know exactly why. Evaluation is largely static.

Agentic AI is fundamentally different. The same question, phrased slightly differently, can produce a different answer with no clear explanation. A change that sharpens performance on finance queries might silently break supply chain queries. And you won't know until a user complains, or worse, until a wrong answer causes a real business problem.

The shift required isn't just technical. It's philosophical: stop treating evaluation as a manual chore and start treating it as a governed data workload.

The foundation of any serious evaluation framework is a golden dataset: a curated, governed set of test cases that lives in a structured table, not a loose CSV on someone's laptop.

But the contents of that dataset matter as much as the format. A stratified approach covers three layers:

Vague metrics like "helpfulness" are hard to act on. Instead, measure the technical relationships between data components:

1. Context Recall: Did the agent retrieve the correct rows from the database? If the right data isn't in the context, the answer can't be right, no matter how well the LLM reasons.

2. Faithfulness: Is every claim in the agent's response actually supported by the retrieved data? This is the anti-hallucination check. An agent that sounds confident while making things up is worse than one that admits uncertainty.

3. Answer Relevance: Did the agent answer the specific question asked, or did it just summarize the data broadly and call it done?

Track these three signals independently. When something fails, you'll know immediately whether the problem is in retrieval or in reasoning, which tells you exactly where to fix it.
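Two of these signals reduce to simple ratios once the inputs exist. The sketch below assumes that row IDs for the expected context and per-claim support verdicts are produced elsewhere (for example by a test harness or a judge model); both function names are illustrative.

```python
def context_recall(retrieved_ids: set[str], expected_ids: set[str]) -> float:
    """Fraction of the expected rows that actually made it into the context."""
    if not expected_ids:
        return 1.0  # nothing was required, so nothing was missed
    return len(retrieved_ids & expected_ids) / len(expected_ids)


def faithfulness(claims_supported: list[bool]) -> float:
    """Share of claims in the answer that are backed by retrieved data."""
    if not claims_supported:
        return 1.0  # an answer with no claims makes no unsupported ones
    return sum(claims_supported) / len(claims_supported)
```

A low `context_recall` with high `faithfulness` points at retrieval; the reverse points at reasoning.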

You can't have humans review thousands of test outputs every time you update a prompt. The solution: use a highly capable LLM to evaluate your agent's outputs against a strict rubric.

A well-designed judge prompt produces a structured JSON response with both a numerical score and a reasoning string, converting qualitative text into hard integers you can aggregate, average, and graph over time.
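Here is a small sketch of the consuming side: validating a judge reply and turning it into a number you can aggregate. The reply shape (`score` as an integer from 1 to 5, plus a `reasoning` string) is an assumption for illustration, and the two replies are made-up examples.

```python
import json
from statistics import mean


def parse_judge_output(raw: str) -> tuple[int, str]:
    """Validate one judge reply of the assumed form {"score": int, "reasoning": str}."""
    data = json.loads(raw)
    score = data["score"]
    if not isinstance(score, int) or not 1 <= score <= 5:
        raise ValueError(f"score out of range: {score!r}")
    return score, str(data["reasoning"])


# Made-up judge replies, standing in for real LLM output.
replies = [
    '{"score": 4, "reasoning": "Supported by retrieved rows."}',
    '{"score": 2, "reasoning": "Cites a figure not in context."}',
]
scores = [parse_judge_output(r)[0] for r in replies]
print(mean(scores))
```

Rejecting malformed or out-of-range replies up front keeps a misbehaving judge from quietly skewing the averages.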

Tools like Promptfoo offer out-of-the-box assertions for common metrics (factual accuracy, format validity, keyword presence, LLM-as-judge scoring) without requiring teams to build evaluation logic from scratch.

Here's where the architecture pays off. In a traditional setup, testing a prompt change means running evaluations, exporting to CSV, uploading to a BI tool, and waiting for a dashboard refresh. Context switching at every step.

When evaluation infrastructure lives in the same ecosystem as the agent (as Incorta's implementation does, using notebooks and dashboards on shared data), the loop collapses. Tweak a parameter, hit run, see the quality dashboard update instantly.

That speed of iteration is what separates teams that improve quickly from teams that stay stuck.

Evaluation results shouldn't sit in an isolated data table. They should be the pulse of the product: visible, queryable, and directly tied to decisions.

A well-designed performance dashboard answers the questions stakeholders actually care about. Is the new model accurate enough to justify its higher cost? Did the latest prompt change improve performance or introduce regressions? Which specific test cases are failing most frequently, and what does that tell us to fix next?

Critically, this visibility should require zero manual effort. Test suites run automatically, either nightly or whenever a model deployment or semantic layer change triggers them. Results push to the dashboard automatically. The health you see always reflects the current state of the system.

There's a gap that even a rigorous golden dataset can't close: the difference between curated test inputs and real user behavior.

In the lab, inputs are clean and controlled. In production, users are messy, impatient, and unpredictable. Closing this gap requires online observability: watching what actually happens when real users interact with the agent.

The most valuable signals are often implicit. A user who interrupts the agent mid-generation is usually signaling a relevance failure. A user who asks the exact same question twice in a row didn't get what they needed the first time. High query volume paired with low active user count typically means people are getting stuck and retrying.
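One of these signals, the immediately repeated question, is easy to detect from a session log. A minimal sketch, assuming each session is available as an ordered list of question strings:

```python
def count_immediate_repeats(questions: list[str]) -> int:
    """Count questions that repeat the one asked just before them.

    Comparison ignores letter case and extra whitespace.
    """
    normalized = [" ".join(q.lower().split()) for q in questions]
    return sum(1 for prev, cur in zip(normalized, normalized[1:]) if prev == cur)
```

This only catches exact repeats after normalization; rephrased retries would need a similarity measure instead of string equality.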

These silent signals are a rich source of data for improving agent performance. Every real-world interaction, including the ones the agent struggles with, is a high-value data point.

Feed those real-world failures back into your golden dataset. Use them to upgrade the system. This is the evaluation flywheel: production traffic doesn't just expose problems, it actively makes the agent smarter over time.

One research finding worth keeping front of mind: once a single agent crosses roughly 45% accuracy on a task, adding more agents to the system often makes overall performance worse. Errors cascade. Coordination overhead grows. System performance slips.

The implication: build simple, well-evaluated agents before layering complexity. Every added layer amplifies both the capability and the failure modes. Evaluation is what keeps you honest about which one is growing faster.

Deploying an AI agent is just the starting point. The teams that succeed in production are the ones that treat evaluation as a first-class engineering discipline: structured test cases, measurable metrics, automated scoring, continuous monitoring, and a feedback loop that turns real user behavior into system improvements.


The core argument is simple: almost every problem an AI team encounters has been seen before. Design patterns capture the accumulated wisdom of those who've solved these problems already - the best practices, the common pitfalls, the right tool for the right job.

The goal isn't to memorize patterns. It's to recognize which pattern fits the problem in front of you, and then benefit from everything that's already been figured out.

With that framing, here are the four patterns from this session.

Instead of writing one giant, monolithic prompt and hoping the LLM does everything correctly, prompt chaining breaks a complex problem into a sequence of smaller, focused subproblems - each with its own targeted prompt.

The output of each step feeds into the next, creating a pipeline.
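Stripped of any framework, the pipeline is a loop over steps. In this sketch the two steps are plain string functions standing in for focused LLM prompts; `run_chain`, `extract`, and `normalize` are illustrative names.

```python
from typing import Callable

Step = Callable[[str], str]


def run_chain(steps: list[Step], initial_input: str) -> str:
    """Feed each step's output into the next step, in order."""
    result = initial_input
    for step in steps:
        result = step(result)
    return result


# Stand-in steps; in a real chain each would wrap one targeted prompt.
def extract(text: str) -> str:
    """Keep only the value after the first colon."""
    return text.split(":", 1)[1].strip()


def normalize(text: str) -> str:
    """Upper-case the extracted value."""
    return text.upper()


print(run_chain([extract, normalize], "Vendor: acme corp"))
```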

Why it works:

Where it fits best: Information processing workflows with predefined steps: data extraction, validation pipelines, structured information retrieval from unstructured sources.

Where it falls short: It doesn't handle situations where the agent needs to make dynamic decisions or take different branches based on input. For that, you need the next pattern.

Routing is the pattern for adaptive, dynamic workflows. Rather than following a fixed sequence, the LLM evaluates the input and decides which path, tool, or pipeline to invoke next.

Think of it as the agent reading the situation and choosing the right response - not having the response hardcoded in advance.

Sample use cases:

Routing logic can take different forms: rule-based if/else logic, a secondary LLM that decides the path, or a trained ML classifier.
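The simplest of those forms, rule-based routing, might look like the sketch below. The keywords and pipeline names are hypothetical; an LLM or classifier router would replace the body of `route` while keeping the same interface.

```python
def route(question: str) -> str:
    """Pick a handler name using simple keyword rules (hypothetical handlers)."""
    q = question.lower()
    if any(word in q for word in ("invoice", "revenue", "ledger")):
        return "finance_pipeline"
    if any(word in q for word in ("inventory", "shipment")):
        return "supply_chain_pipeline"
    return "general_qa"  # fallback when no rule matches
```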

The key insight: routing is what makes an agent feel intelligent and responsive rather than mechanical.

When a workflow contains components that don't depend on each other's outputs, there's no reason to run them sequentially. Parallelization means executing those independent components at the same time, cutting latency significantly.

A simple example: if your pipeline needs to search two data sources and then combine the results, you don't need to search source one, wait, then search source two. Both searches can run simultaneously, and the final step combines their outputs once both are done.

Most agentic frameworks handle the orchestration automatically through asynchronous execution - you kick off the tasks and let the framework manage the timing.
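Without a framework, the two-source example can be sketched with Python's standard `asyncio`. The `search` function is a stand-in that sleeps to simulate latency; the source names are placeholders.

```python
import asyncio


async def search(source: str, query: str) -> list[str]:
    """Stand-in for an I/O-bound search; sleep simulates network latency."""
    await asyncio.sleep(0.1)
    return [f"{source}: result for {query!r}"]


async def search_both(query: str) -> list[str]:
    # Both searches run concurrently; gather returns results in argument order.
    first, second = await asyncio.gather(
        search("source_one", query),
        search("source_two", query),
    )
    return first + second  # the combine step runs once both are done


results = asyncio.run(search_both("open orders"))
```

Total wait time is roughly that of the slower search, not the sum of both.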

The payoff: Faster pipelines, more responsive agents, better user experience, without changing any of the underlying logic.

Parallelization pairs naturally with chaining and routing to build workflows that are both sophisticated and efficient.

This is arguably the most transformative pattern of the four. Tool use enables LLMs to reach beyond their training data and interact with the outside world - databases, APIs, code execution environments, other agents, even physical systems.

Without tools, an LLM is limited to what it learned during training. With tools, it can:

How it works in practice:

This loop - decide, call, observe, decide - is what gives modern agents their real capability. Tool use isn't an add-on. It's what makes agents useful.
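The loop itself is small. In the sketch below, `fake_model` stands in for an LLM, and the tool registry and price data are invented for illustration; the structure (decide, call, observe, with a step limit) is the part that carries over.

```python
from typing import Callable

# Hypothetical tool registry: tool name -> callable.
TOOLS: dict[str, Callable[[str], str]] = {
    "lookup_price": lambda item: {"bike": "499.00"}.get(item, "unknown"),
}


def fake_model(observations: list[str]) -> dict:
    """Stand-in for an LLM: request a tool first, then answer from what it saw."""
    if not observations:
        return {"action": "call", "tool": "lookup_price", "arg": "bike"}
    return {"action": "answer", "text": f"The price is {observations[-1]}."}


def run_agent(max_steps: int = 5) -> str:
    """Run the decide/call/observe loop until the model answers or steps run out."""
    observations: list[str] = []
    for _ in range(max_steps):
        decision = fake_model(observations)          # decide
        if decision["action"] == "answer":
            return decision["text"]
        tool = TOOLS[decision["tool"]]
        observations.append(tool(decision["arg"]))   # call, then observe
    return "Stopped: step limit reached."
```

The step limit matters in practice: it keeps an agent that never settles on an answer from looping indefinitely.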

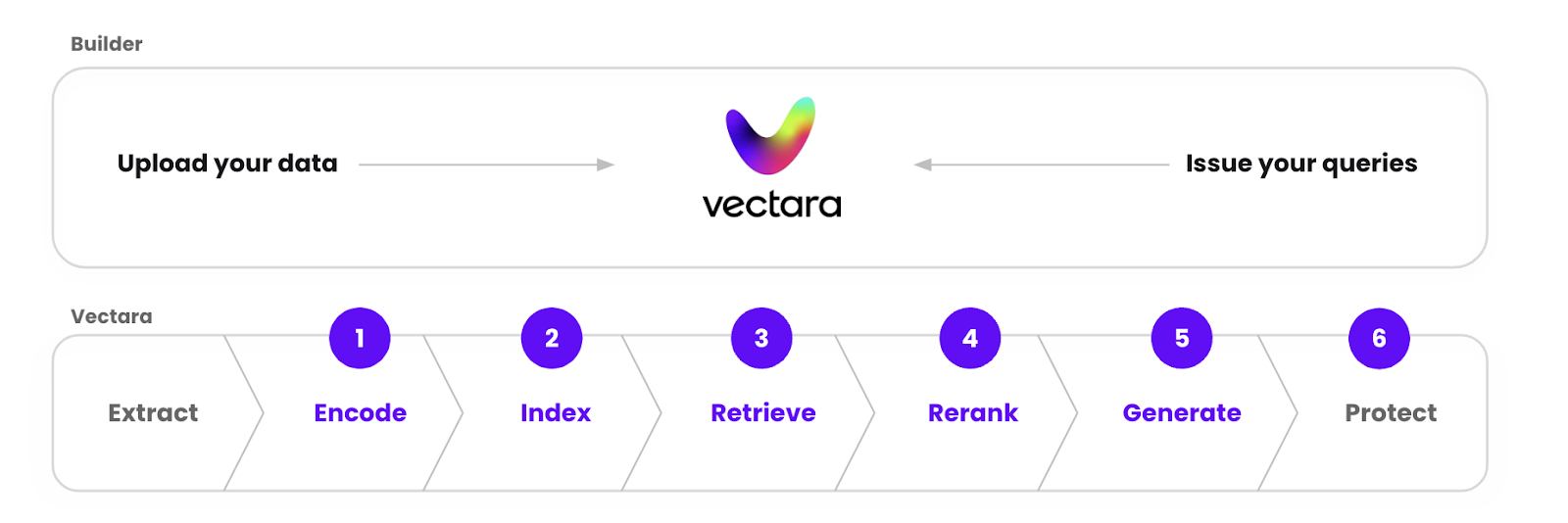

After the four patterns, Abd Rahman walked through Retrieval-Augmented Generation (RAG) - one of the most practical techniques for reducing hallucination in production environments.

The core problem RAG solves: LLMs don't know what they don't know. Ask them about your internal HR policy, your proprietary data, or a recent event, and they'll either hallucinate or admit ignorance. RAG fixes this by giving the LLM a trusted knowledge base to draw from at query time.

Offline (setup):

Online (at query time):

We showed a demo of a practical implementation: BI engineers save verified question-and-SQL pairs as reference anchors. When a business user asks a question, the system semantically matches it to the closest reference question - and uses the verified SQL query as the foundation for the answer.

Crucially, it's not a text match. When the same question was asked with a different filter (changing "Oregon" to "Washington"), the system recognized the semantic similarity, reused the reference query, and updated only the relevant filter - leaving everything else intact.
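The lookup step can be sketched as a nearest-reference search. Two loud assumptions: the token-overlap score below is only a crude stand-in for the embedding similarity a real system would use, and the reference questions and SQL templates are invented for illustration.

```python
def jaccard(a: str, b: str) -> float:
    """Token-overlap score; a crude stand-in for embedding similarity."""
    ta, tb = set(a.lower().split()), set(b.lower().split())
    return len(ta & tb) / len(ta | tb) if ta | tb else 0.0


# Hypothetical verified question -> SQL template pairs saved by BI engineers.
REFERENCES = {
    "total sales in {state}": "SELECT SUM(amount) FROM sales WHERE state = :state",
    "headcount by department": "SELECT dept, COUNT(*) FROM employees GROUP BY dept",
}


def closest_reference(question: str) -> str:
    """Return the SQL of the reference question most similar to the input."""
    best = max(REFERENCES, key=lambda ref: jaccard(ref, question))
    return REFERENCES[best]
```

Keeping the filter as a bound parameter (`:state`) is what lets the system swap "Oregon" for "Washington" while the validated query body stays untouched.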

The result: business users get answers grounded in queries that a BI expert already validated, and trust is built in.

One subtle but important point: how you chunk documents significantly affects RAG quality. Blindly splitting at fixed word counts can break semantic context mid-thought — the model ends up with fragments that don't make sense in isolation. Smart chunking strategies (semantic boundaries, paragraph-aware splits) are essential for reliable retrieval.
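A paragraph-aware splitter is one simple version of this. The sketch assumes paragraphs are separated by blank lines and uses a word budget per chunk; real pipelines often count tokens instead.

```python
def chunk_by_paragraph(text: str, max_words: int) -> list[str]:
    """Group whole paragraphs into chunks of at most max_words words.

    A paragraph longer than max_words becomes its own oversized chunk
    rather than being cut mid-thought.
    """
    chunks: list[str] = []
    current: list[str] = []
    count = 0
    for para in (p.strip() for p in text.split("\n\n")):
        if not para:
            continue  # skip empty paragraphs from repeated blank lines
        words = len(para.split())
        if current and count + words > max_words:
            chunks.append("\n\n".join(current))  # close the chunk at a boundary
            current, count = [], 0
        current.append(para)
        count += words
    if current:
        chunks.append("\n\n".join(current))
    return chunks
```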

These four patterns - prompt chaining, routing, parallelization, and tool use - are the building blocks of every production-grade agentic system. And RAG is the practical answer to one of enterprise AI's most persistent problems: getting an LLM to tell you the truth about your own data.

Everyone is talking about AI right now. Boards and CEOs want to see movement, and CIOs are under pressure to show something concrete.

We recently acquired Layout.dev, an AI application-building platform that’s designed to accelerate how organizations create applications and agentic workflows. For me, this isn’t about reacting to what’s happening right now. It’s about where things are going. Here’s how I think about it.

Business users don’t just want another dashboard. They want to connect to the systems they use every day (SAP, Workday, and ServiceNow) and get answers without waiting months for IT projects and pipelines.

For years, the interface moved from writing SQL to drag-and-drop. The next step is obvious. You type what you need, and the system builds it. You don’t click through screens. You say, “Connect these sources. Show me this. Add a filter. Build this for me.” That’s the direction.

That’s why Layout.dev matters. It changes how you interact with the platform.

But this is also where companies get into trouble.

AI works well when you’re drafting documents or generating code, because a human reviews the output. It breaks down when you try to run the core of the business on it.

If you can’t trust the system you use to close your books, how can you trust an automated system to act on your data?

Closing the books is the simplest test. Can you produce numbers you would report to the government? If the answer is no, adding AI won’t fix it.

AI is an accelerator. It makes you faster. But AI is trusted only if it works on trusted data. If the foundation isn’t solid, you’re just building faster on top of a problem.

If anything, the acquisition reflects a conviction I’ve held for years: AI will not fix a weak data foundation. It will only expose it.

Right now, companies move quickly to build demos and connect a model to some data. In two weeks, they can show something that at first glance looks impressive. That’s not the same as running a business on it.

When we talk about enterprise AI, it’s worth asking a simple question. Say that you’ve invested millions in your data platform: Snowflake, Databricks, Fabric, whatever it is. These are smart systems built by smart engineers. But can you use any of it to close your books?

Closing the books requires numbers that reconcile. Numbers you would submit to the IRS. If your current system can’t reliably do that, then adding AI on top won’t magically fix the underlying problem.

“Good Enough?”

The first problem is the quality of the data.

Most enterprise data environments weren’t designed to operate directly on detailed, highly relational source data at scale. The engines underneath were built decades ago. ETL pipelines exist because those engines can’t handle the raw complexity of enterprise systems directly. So, the data gets simplified just to make the engine run. Once you do that, you’ve already lost part of the context.

When you reshape and aggregate data, you lose detail and eventually start to see mismatches. Sometimes they’re small and might be acceptable. But “good enough” won’t cut it in areas that don’t tolerate approximation like finance, supply chain, or banking.

That’s why many AI initiatives look promising at the surface but then stall when they reach the core of the business. Writing emails, drafting documents, and generating code — those are productive uses. A human still reviews the output, and the error tolerance is manageable.

But when you are reconciling invoices, matching contracts, validating currency rates, or recognizing revenue, the answers must be precise. If the underlying data is incomplete or simplified, the model will still give you an answer, even when something is missing. That’s a problem in finance.

Building a Foundation

The second problem is control. Large language models are generalists. They won’t understand your company’s specific financial rules or contract clauses. If you expose enterprise data to a model without constraining it, you’re relying on probability where determinism is required.

Our view at Incorta has always been that the foundation should come first. We operate directly on live enterprise data using Direct Data Mapping™. Instead of rebuilding everything into aggregates before it becomes usable, we work with the data as it exists in systems of record, such as SAP, Oracle, Workday, and others, preserving detail and relationships.

When we apply AI, we don’t just hand the question to an LLM and expect it to guess the right tables. Incorta first narrows the data down to the exact tables and columns. Then we ask the model to work inside that. That way, the model is operating within the real structure of your enterprise data, not inventing context.

This is exactly why Layout.dev matters.

For decades, the interface evolved from SQL to drag-and-drop dashboards. Now the interface is shifting again. Instead of building reports manually, users want to describe what they need (connect these systems, monitor these invoices, flag anomalies, trigger actions) and have the system build it.

Layout.dev gives us that interaction model. It allows business users and developers to define workflows and agents directly on top of live enterprise data.

But here’s the key: the interface can change without compromising the foundation. The easier it becomes to build an agent, the more important it is that the data it acts on is complete and governed.

Look at something simple like invoices. Today, validating an invoice means chasing it across systems (invoices, contracts, rate tables, and currency rules) and manually reconciling the pieces. If you have hundreds of them, it takes a lot of time. An agent can automate that. But if the underlying data doesn’t reconcile across systems, you’re automating error at scale.

AI is a multiplier. It multiplies precision, or it multiplies error. If the foundation is accurate and trusted, AI can increase speed and efficiency. If the foundation is fragmented or simplified beyond recognition, AI will expose those weaknesses more quickly.

So before making AI mandates across the enterprise, ask yourself whether your current data platform produces numbers you would confidently report to the government. If not, that is the place to focus first.

AI will absolutely change how we interact with systems. That’s why we invested in Layout.dev.

To learn more, read our official press release here.


Here's what they covered (and why it matters):

Large language models (LLMs) have come a long way from their early days as chatbots. While those first chatbot applications sparked widespread excitement in AI, they came with real limitations - narrow context windows, static training data, and an inability to interact with the world beyond generating text.

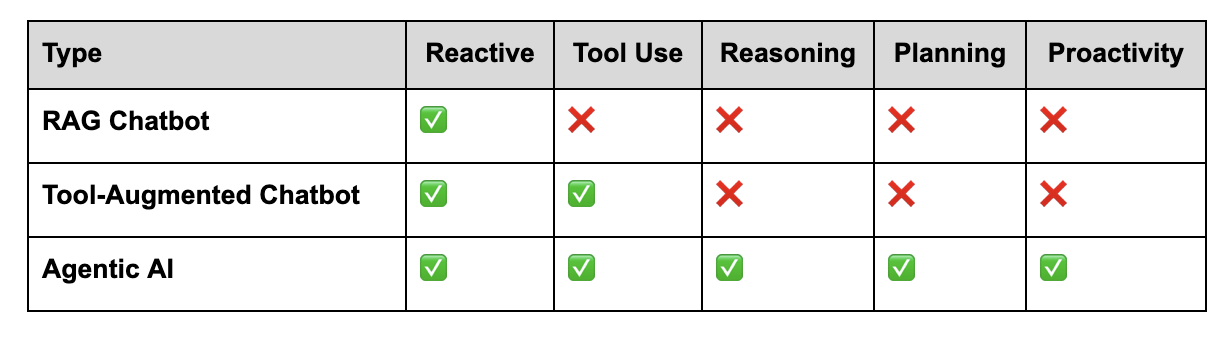

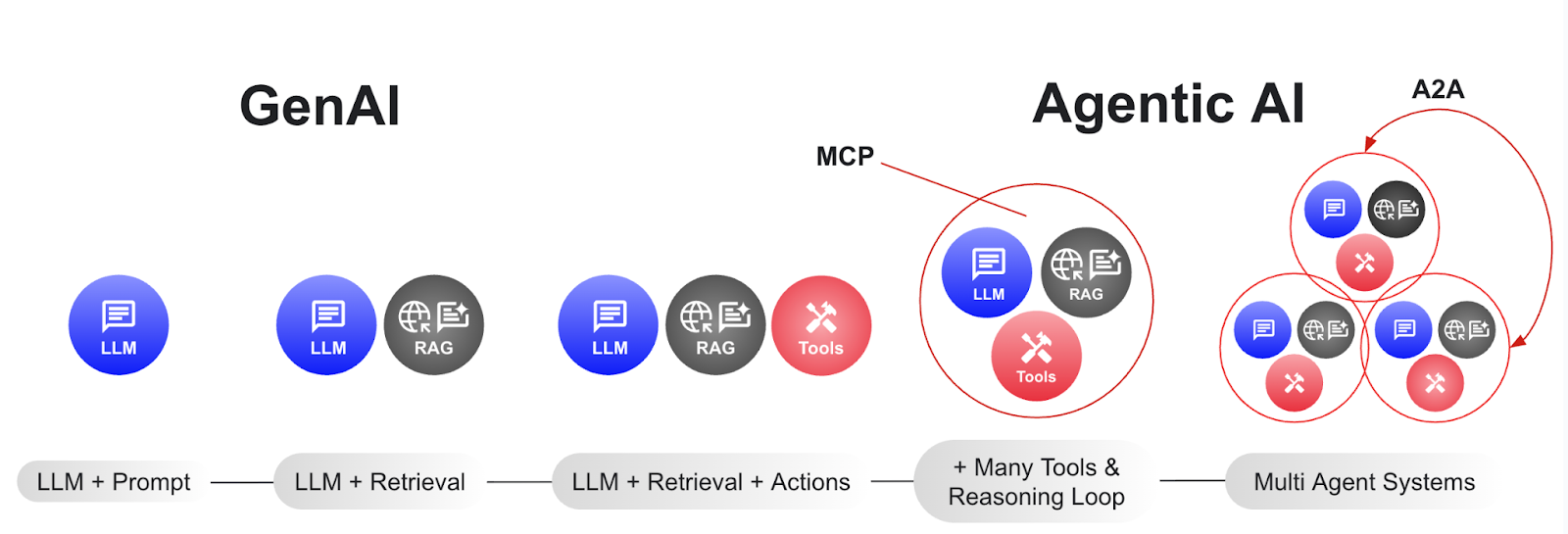

The evolution since then has moved through four distinct phases:

This fourth phase - agentic AI at scale - is where things get both powerful and complex. And that complexity is exactly why design patterns matter.

"When we get a new problem, we don't have to come up with a new architecture from scratch. We can just find the closest design pattern, use it, and benefit from all the wisdom of everyone who's applied it before."

Autonomy is the goal. But in the enterprise world - especially in finance, legal, and compliance - unchecked autonomy is a liability.

We frame the Human in the Loop (HITL) pattern simply: use it when the cost of an error is higher than the need for speed.

The pattern works like this:

This transforms the human's role from passive requester to active expert in the loop.

1. The Ambiguity Check: When a request is technically answerable but semantically vague, such as "show me total sales for my favorite product category", the agent does not guess (and potentially hallucinate). Instead, it surfaces an input prompt asking for clarification. Once the user responds, the workflow resumes with accuracy.

2. The Strategic Dead End: When an agent hits a genuine wall - like being asked for "the longest flight distance" in a retail dataset that contains no flight data - it doesn't crash or fabricate a number. It analyzes the situation, presents the user with strategic options (pivot the query, use a different dataset, cancel the request), and continues from where it stopped once a decision is made.

The key shift: the agent treats the human as a partner, not just a prompter.
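The ambiguity check can be sketched as a handler that either answers or pauses for input. The vague-phrase list, the `AgentReply` type, and the clarification question are hypothetical; a real agent would more likely use the model itself to judge ambiguity.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AgentReply:
    status: str   # "answer" or "needs_input"
    message: str


# Hypothetical phrases the agent cannot resolve without asking the user.
VAGUE_PHRASES = ("my favorite", "the usual", "the best")


def handle(question: str, clarification: Optional[str] = None) -> AgentReply:
    """Pause for input when the request is vague; resume once clarified."""
    vague = any(p in question.lower() for p in VAGUE_PHRASES)
    if vague and clarification is None:
        return AgentReply("needs_input", "Which product category do you mean?")
    resolved = clarification or question
    return AgentReply("answer", f"Running query for: {resolved}")
```

Calling `handle` a second time with the user's clarification resumes the same request rather than starting over.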

Once trust and safety are handled by HITL, the next challenge is scale. And a single agent, no matter how capable, can't do everything.

Ask one agent to write code, do research, manage a project, and handle exceptions simultaneously - and you'll get confusion and mistakes. The answer isn't a bigger agent - it's a team of specialized ones.

Agent-to-Agent Communication (A2A) is the open standard protocol that makes this teamwork possible. Importantly, it's platform-agnostic: an agent built by one company can collaborate with an agent from a completely different company, regardless of which underlying AI model they use.

1. Discovery: Like looking up a phone book - before an agent asks for help, it queries a registry to find out which other agents are available.

2. Identity: Each agent shares an "agent card" - a structured introduction listing its name, capabilities, and the specific tasks it can perform.

3. Communication: Agents begin assigning tasks to each other and collaborating, either synchronously (for quick tasks) or asynchronously (for heavy, long-running jobs).

4. Security: Every agent interaction in an enterprise context is encrypted and logged, producing a full audit trail of who did what and when - essential for regulated industries.

Under the hood, A2A runs on standard JSON-RPC. A request is a simple, platform-independent message (e.g., "What are the total sales for bikes?"). The response isn't a simple chat reply - it's a structured data artifact that includes the answer and the evidence: the logic used, the SQL generated, and a full record of how the result was produced.
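For a feel of what "standard JSON-RPC" means here, the sketch below builds a JSON-RPC 2.0 envelope and reads a response. Only the envelope fields (`jsonrpc`, `id`, `method`, `params`, `result`, `error`) come from JSON-RPC 2.0; the method name `example/ask` and the params shape are placeholders, not taken from the A2A specification.

```python
import json


def build_request(question: str, request_id: int) -> str:
    """Wrap a question in a JSON-RPC 2.0 envelope.

    The method name and params shape are placeholders for illustration.
    """
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "example/ask",
        "params": {"question": question},
    })


def read_result(raw_response: str) -> dict:
    """Return the result payload, or raise if the peer reported an error."""
    response = json.loads(raw_response)
    if "error" in response:
        raise RuntimeError(response["error"].get("message", "unknown error"))
    return response["result"]
```

Because both sides are plain JSON, any application that can send an HTTP request and parse JSON can act as a client.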

This matters for enterprise adoption: you're not locked into a single interface. Because the protocol is standard JSON, any application in your tech stack can programmatically access these agents.

These two patterns complement each other naturally. Human in the Loop ensures that trust and safety are never sacrificed for speed. Agent-to-Agent Communication ensures that complexity doesn't become a bottleneck for scale. Together, they form a foundation for agentic AI that enterprises can actually rely on.

The session's key takeaway: agentic AI is about designing systems that know when to pause, when to ask for help, and how to collaborate effectively.

The supply chain as we know it is dead. Supply chain tech has drastically shifted over decades from basic, reactive dashboards, to command centers with digital twins that help you decide what to do next, to fully autonomous platforms that make (and execute!) decisions on their own.

Gartner®'s December 2025 report, "From Insights to Guided Actions: The Visibility Journey Toward Supply Chain Orchestration Platforms", maps out this evolution and what it means for supply chain leaders.

But here's what most leaders miss: none of these advanced capabilities work without the right data foundation. Most organizations simply aren’t ready.

We feel that Gartner® breaks down the evolution of supply chain technology into four major phases:

1. Business Intelligence (Early 2000s): Organizations built traditional data warehouses using mainly structured enterprise data. BI platforms provided insights through descriptive analytics (what happened) and diagnostic analytics (why it happened). While valuable, these systems were retrospective and couldn't enable real-time decision-making.

2. Control Towers (2010s): With the rise of IoT and near-real-time data, companies deployed domain-specific control towers focused on individual functions like logistics and transportation. These tools provided visibility and alerts but remained functionally siloed. They offered "see, understand, act, learn" capabilities within their narrow scope but couldn't support cross-functional decision making.

3. Command Centers (2020s): The evolution toward command centers represented a significant leap. These frameworks connect data from multiple internal and external sources, providing cross-functional insights. Crucially, they leverage a digital supply chain twin to enable simulation, optimization, and response capabilities. However, decisions and execution still remain human-driven.

4. Orchestration Platforms (2030 and beyond): The next frontier: supply chain orchestration platforms that go beyond insights to actually prescribe and perform decisions through existing operational systems. With capabilities like knowledge graphs, generative AI, and agentic AI, these platforms will automate decision-making, rather than just augmenting it.

At the heart of this evolution is the digital supply chain twin - defined by Gartner® as "a digital representation of the physical supply chain that can be used to create plans and make decisions."

And, according to Gartner®, a true digital supply chain twin must include seven essential capabilities:

"A digital supply chain twin is neither a set of data tables in a data warehouse (based on structured data) nor a data lake (based on structured and unstructured data), nor a single data model in an SCP/SCM suite solution."

In other words, you can't fake it. You can't build a true digital twin on top of slow, batch-processed data or fragmented point solutions. You need real-time, granular operational data that's properly associated and continuously refreshed.

Most organizations have accumulated a patchwork of supply chain technologies: ERP systems, warehouse management systems, transportation management systems, planning tools, and various point solutions. Each generates valuable data, but that data typically lives in silos.

Traditional approaches to integration - extracting data from source systems, transforming it, and loading it into a data warehouse or lake - create unavoidable lag. By the time your data is ready for analysis, it no longer reflects current reality.

This lag becomes increasingly problematic as you move up the maturity curve:

Gartner® emphasizes that "it all starts with building the foundation: a consistent data and applications architecture." Without that foundation, investments in advanced analytics, AI, and orchestration capabilities will underdeliver.

Incorta's Direct Data Mapping™ technology delivers exactly what Gartner® identifies as essential - real-time, granular, connected operational data that can power digital twins, AI models, and autonomous decision-making.

Real-time data for real-time decisions: Incorta connects directly to your ERP, WMS, TMS, and other source systems, delivering live data without the lag that traditional ETL creates. This is the "real-time transactions and events from granular data" that Gartner® describes as foundational for digital supply chain twins.

Preserves relationships across your entire supply chain: Unlike traditional approaches that break apart your data during transformation, Incorta maintains the connections between orders, inventory, capacity, suppliers, and customers. This gives you the "correlations and configurations between planning, transaction, and event data" needed for command centers to simulate scenarios and optimize decisions.

AI-ready from day one: Advanced analytics and AI need detailed, granular transactions with full context - not pre-aggregated summaries. Incorta delivers exactly this, enabling the "probability distributions derived from transactional analysis" that Gartner® identifies as critical for digital twins.

Built to scale with your ambitions: Whether you're launching your first control tower or planning for autonomous orchestration, Incorta grows with you. Connect new data sources and expand capabilities without ripping and replacing your infrastructure - positioning you to adopt command centers, digital twins, and eventually autonomous orchestration as these capabilities mature.

If you're evaluating supply chain technology investments, Gartner® offers a useful lens for assessment:

Where are you today? Most organizations are somewhere between early-stage control towers and emerging command center initiatives. Few have truly integrated digital twins that span end-to-end operations.

What's holding you back? Often, the constraint isn't the lack of advanced analytics tools or AI capabilities: it's the quality, speed, and integration of underlying data. This can cause distrust in your data, and hesitation in making faster decisions.

What foundation do you need? Can you access real-time operational data? Can you associate that data across functions? Can you enrich it with external signals? If not, advanced tools will struggle to deliver any value.

How do you future-proof? Build a data foundation that supports your current needs while enabling future capabilities. A platform that provides real-time access to all operational data, supports advanced analytics and AI, and can incorporate external data sources will serve you whether you're deploying your first command center or planning for autonomous orchestration.

Preparing today for the supply chain of tomorrow

Supply chain orchestration platforms are an ambitious vision, one that Gartner® acknowledges is still "a prospective concept with considerable hype in the solution market for the time being."

Autonomous decision making and execution will require technology and organizational change: "Autonomous implementation of supply chain decisions will require reorganizing existing supply chain personas, processes, operating models, and technologies."

But organizations that invest today in modern data architectures that provide real-time, granular, connected operational data will be positioned to adopt advanced capabilities as they mature. Those that continue to build on legacy data infrastructure will find themselves constantly constrained by data lag, silos, and quality issues.

Understand exactly where your supply chain technology fits in the evolving landscape, and how to build the data foundation you need today to win tomorrow.

GARTNER is a registered trademark and service mark of Gartner, Inc. and/or its affiliates in the U.S. and internationally, and MAGIC QUADRANT is a registered trademark of Gartner, Inc. and/or its affiliates and are used herein with permission. All rights reserved.

Gartner does not endorse any vendor, product or service depicted in our research publications, and does not advise technology users to select only those vendors with the highest ratings or other designation. Gartner research publications consist of the opinions of Gartner research organization and should not be construed as statements of fact. Gartner disclaims all warranties, expressed or implied, with respect to this research, including any warranties of merchantability or fitness for a particular purpose.

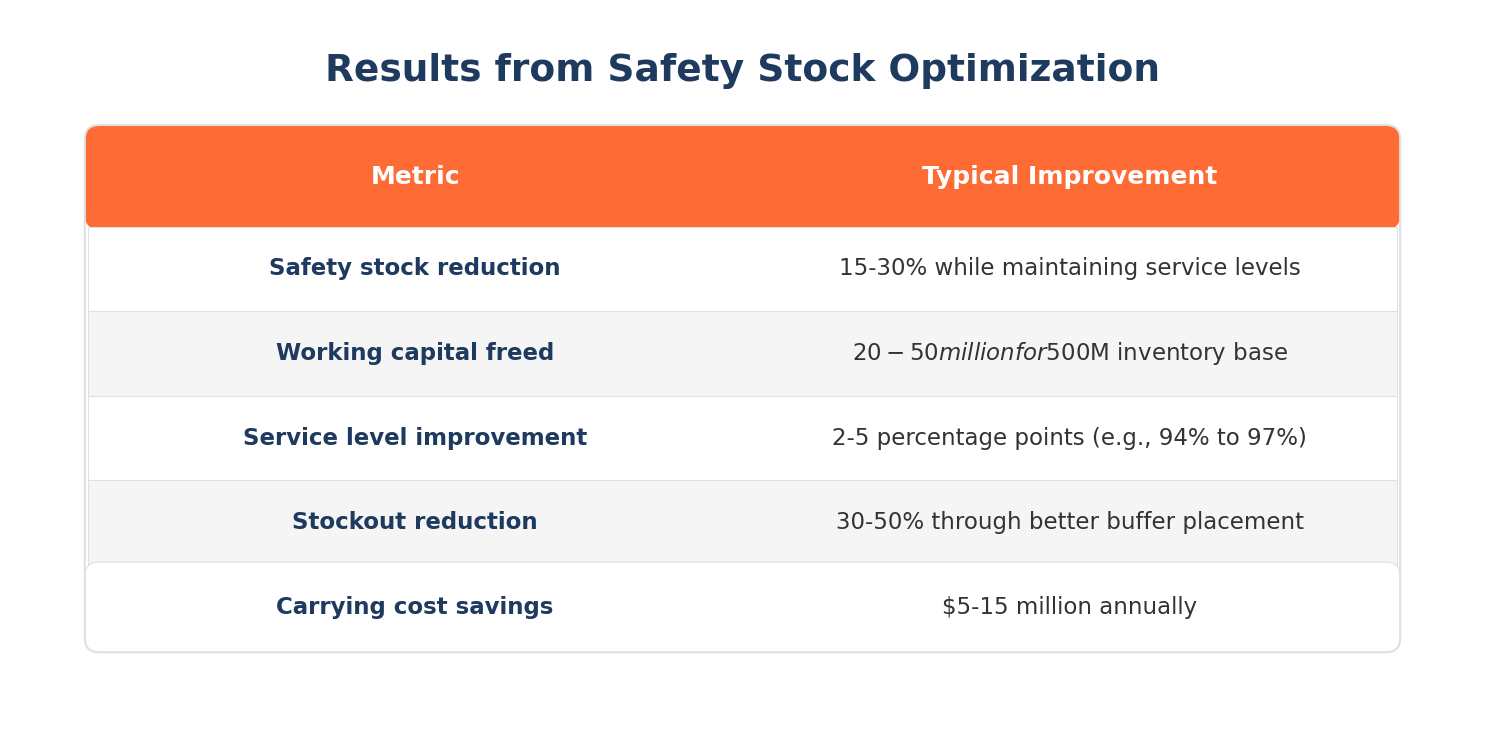

Safety stock is buffer inventory held to protect against demand variability and supply uncertainty. Most companies set safety stock once per quarter using outdated formulas, resulting in $20-50 million in unnecessary inventory for a typical $500 million supply chain. This guide explains how safety stock works, why traditional approaches fail, and how to optimize buffer inventory for today's volatile supply chains.

Safety stock (also called buffer stock or reserve inventory) is extra inventory held beyond expected demand to protect against uncertainty. It serves as insurance against:

Safety stock sits in addition to cycle stock (inventory needed to meet average demand between replenishments) and pipeline stock (inventory in transit).

Example: If you expect to sell 1,000 units during a 2-week lead time, you might hold 1,000 units of cycle stock plus 200 units of safety stock to cover potential demand spikes or delivery delays.

Traditional safety stock formulas account for demand variability and desired service level:

Safety Stock = Z × σ × √L

Where:

Safety Stock = Z × √(L × σd² + d² × σL²)

Where:
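
The two formulas above can be sketched in Python. This is a minimal illustration, not a definitive implementation: it assumes the conventional reading of the symbols (Z as the z-score for the target service level, σ and σd as the standard deviation of demand per period, d as average demand per period, L as average lead time in the same periods, and σL as the standard deviation of lead time), and it assumes normally distributed demand. The function names and input numbers are hypothetical.

```python
import math
from statistics import NormalDist


def z_for_service_level(service_level: float) -> float:
    """Z-score for a target service level, assuming normally distributed demand."""
    return NormalDist().inv_cdf(service_level)


def safety_stock_demand_only(z: float, sigma_d: float, lead_time: float) -> float:
    """Safety Stock = Z x sigma x sqrt(L): demand variability only."""
    return z * sigma_d * math.sqrt(lead_time)


def safety_stock_demand_and_lead_time(
    z: float, avg_demand: float, sigma_d: float, lead_time: float, sigma_lt: float
) -> float:
    """Safety Stock = Z x sqrt(L x sigma_d^2 + d^2 x sigma_L^2): demand and lead time variability."""
    return z * math.sqrt(lead_time * sigma_d ** 2 + avg_demand ** 2 * sigma_lt ** 2)


# Hypothetical inputs: 100 units/day average demand, 20 units/day standard
# deviation, 14-day average lead time, 2-day lead time standard deviation.
z = z_for_service_level(0.95)
basic = safety_stock_demand_only(z, sigma_d=20, lead_time=14)
combined = safety_stock_demand_and_lead_time(
    z, avg_demand=100, sigma_d=20, lead_time=14, sigma_lt=2
)

print(f"Z-score for 95% service level: {z:.3f}")
print(f"Demand variability only: {basic:.0f} units")
print(f"Demand and lead time variability: {combined:.0f} units")
```

With these inputs, accounting for lead time variability produces a noticeably larger buffer than the demand-only formula. When the lead time standard deviation is zero, the second formula reduces to the first.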

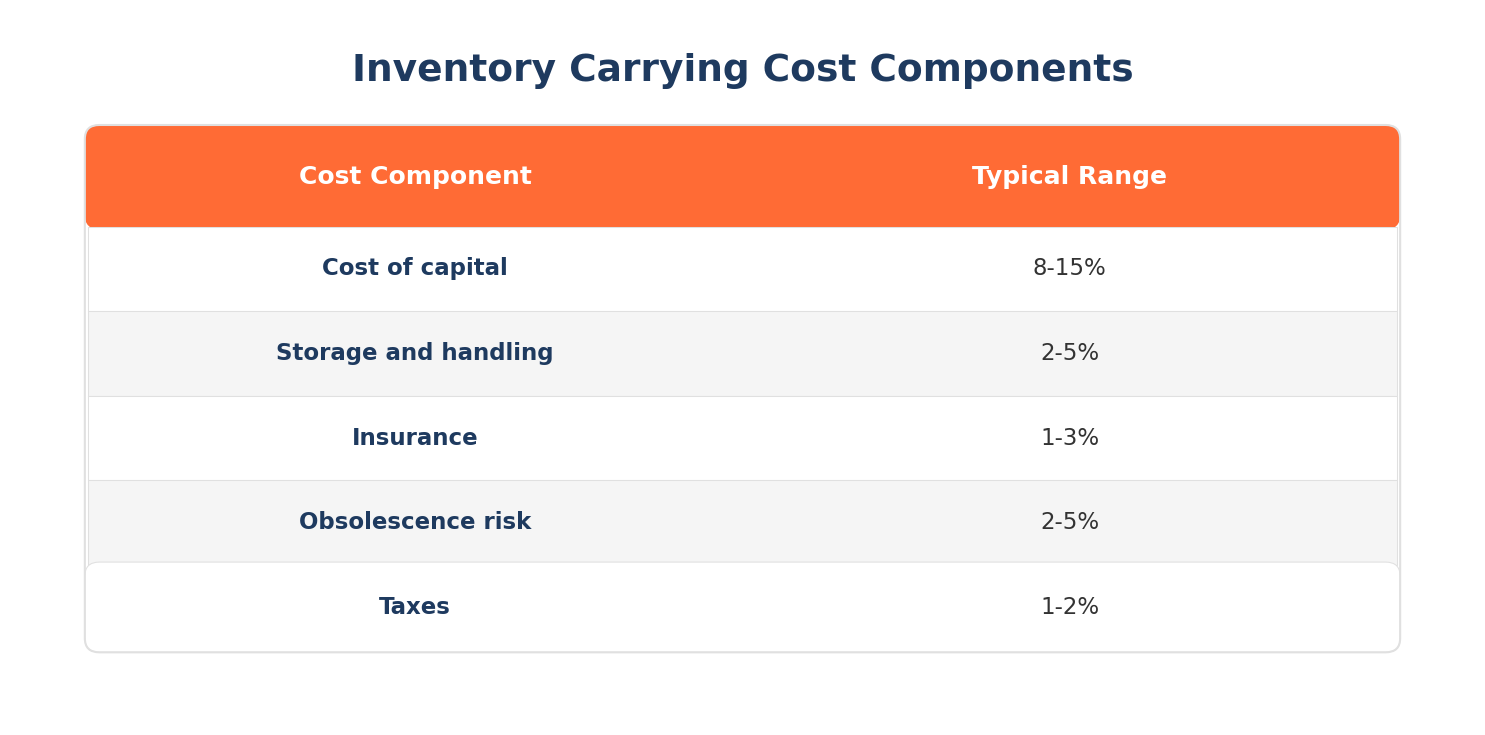

Carrying more safety stock than necessary creates significant costs:

Industry estimates put inventory carrying costs at 20-30% of inventory value annually, including:
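
As a rough illustration of what that range means in dollars, the sketch below applies the 20-30% carrying cost estimate to the $20-50 million of unnecessary inventory cited earlier for a typical $500 million supply chain. The function name is hypothetical, and pairing the low ends and high ends of the two ranges is simply one way to bound the result.

```python
def annual_carrying_cost(inventory_value: float, carrying_rate: float) -> float:
    """Annual cost of holding inventory, as a fraction of its value."""
    return inventory_value * carrying_rate


# Bounds taken from the figures quoted in this guide.
low = annual_carrying_cost(20_000_000, 0.20)
high = annual_carrying_cost(50_000_000, 0.30)

print(f"Estimated annual carrying cost of excess safety stock: ${low:,.0f} to ${high:,.0f}")
```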

Capital tied up in excess safety stock cannot be deployed on:

Most organizations calculate safety stock using formulas that were designed for stable, predictable supply chains. Today's reality is different.

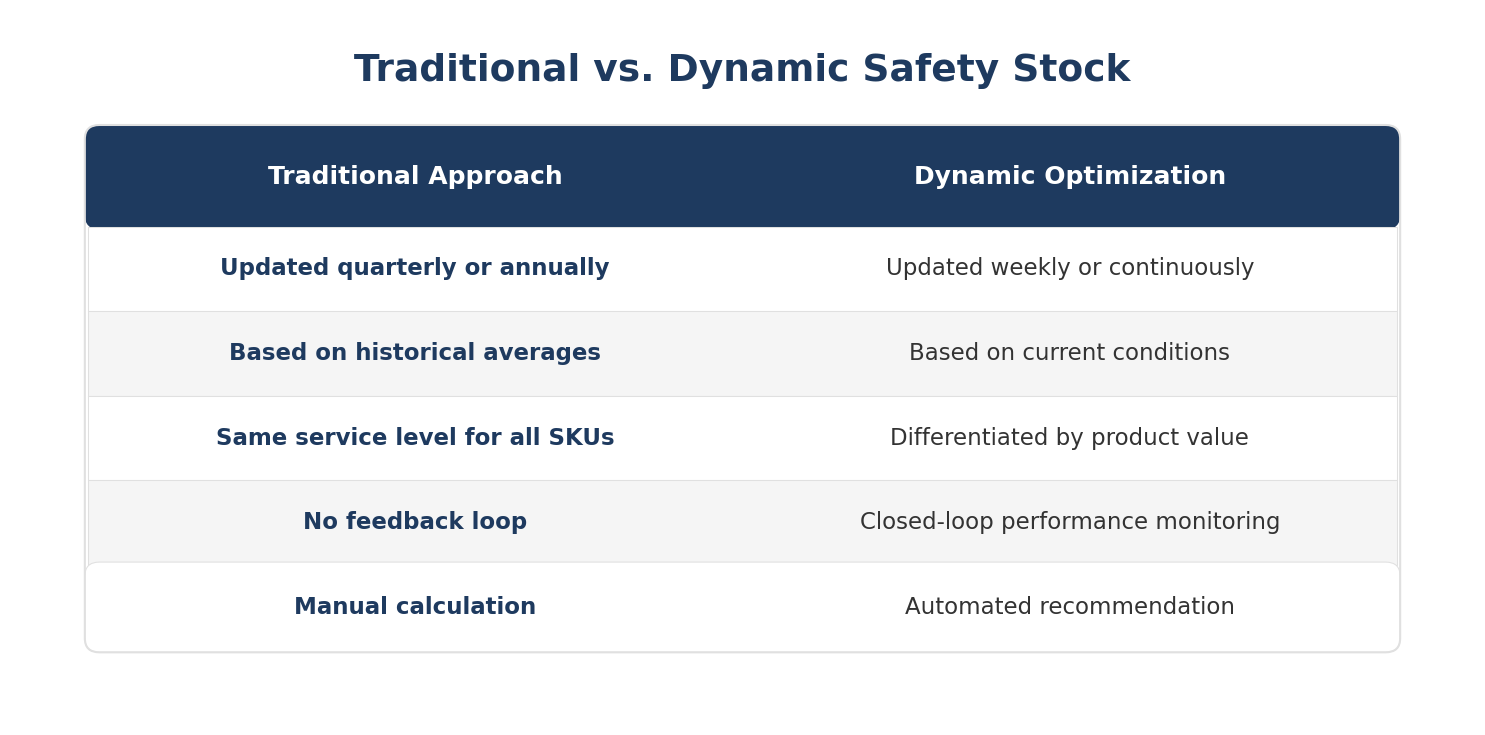

The issue: Most companies review and update safety stock quarterly or annually. But supply chain conditions change weekly.

The impact: A safety stock level set in January based on Q4 data may be completely wrong by March when demand patterns, supplier performance, and lead times have all shifted.

What's needed: Continuous recalibration based on current conditions, not periodic updates based on historical averages.

The issue: Safety stock formulas require inputs like demand variability and lead time variability. Most organizations calculate these from historical data that's months or years old.

The impact: If supplier reliability has improved (or degraded) in the past 6 months, your safety stock is calibrated to the wrong reality. If demand patterns have shifted due to market changes, your buffer is sized for a world that no longer exists.

What's needed: Real-time inputs that reflect current demand variability, current supplier performance, and current lead time patterns.
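
One way to picture the difference between stale and current inputs is to recompute the buffer over a rolling window of recent demand. The sketch below is an assumption-laden illustration, not Incorta's method: it uses the basic Z × σ × √L formula, a hypothetical `rolling_safety_stock` helper, and made-up daily demand figures in which variability rises partway through the series.

```python
import math
from statistics import NormalDist, stdev


def rolling_safety_stock(daily_demand, window, lead_time_days, service_level=0.95):
    """Recompute safety stock for each trailing window of daily demand."""
    if window < 2 or window > len(daily_demand):
        raise ValueError("window must be between 2 and len(daily_demand)")
    z = NormalDist().inv_cdf(service_level)
    levels = []
    for end in range(window, len(daily_demand) + 1):
        recent = daily_demand[end - window:end]
        # Sample standard deviation of the most recent `window` days only.
        levels.append(z * stdev(recent) * math.sqrt(lead_time_days))
    return levels


# Hypothetical demand: a stable stretch followed by a volatile one.
stable = [100, 102, 98, 101, 99, 100, 103, 97]
volatile = [100, 140, 60, 150, 55, 130, 70, 145]
levels = rolling_safety_stock(stable + volatile, window=8, lead_time_days=14)

print(f"Buffer sized on the stable period:   {levels[0]:.0f} units")
print(f"Buffer sized on the volatile period: {levels[-1]:.0f} units")
```

A buffer frozen at the first value would be far too small once demand turns volatile, which is the failure mode described above.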

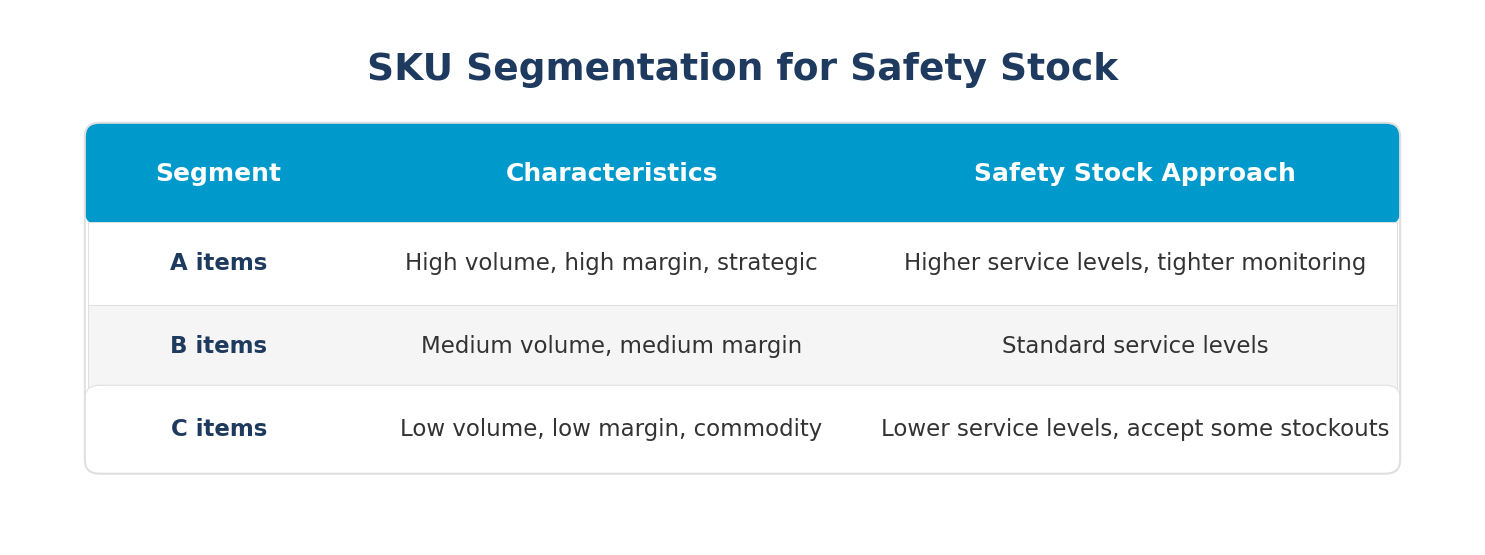

The issue: Many organizations apply the same service level target (e.g., 95%) across all SKUs, regardless of margin, strategic importance, or customer impact.

The impact: You over-invest in safety stock for low-margin commodities while under-investing for high-margin strategic products. You treat a $5 SKU the same as a $5,000 SKU.

What's needed: Differentiated service levels based on product value, margin, customer importance, and substitutability.
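
A minimal sketch of differentiated service levels follows. The segment names, margin thresholds, and service level targets are all hypothetical placeholders; a real policy would also weigh customer importance and substitutability. The z-score lookup assumes normally distributed demand.

```python
from statistics import NormalDist

# Hypothetical policy: higher-value segments get higher service level targets.
SERVICE_LEVEL_BY_SEGMENT = {"A": 0.99, "B": 0.95, "C": 0.90}


def assign_segment(gross_margin_pct: float, strategic: bool) -> str:
    """Assign a segment from margin and strategic importance (illustrative thresholds)."""
    if strategic or gross_margin_pct >= 0.40:
        return "A"
    if gross_margin_pct >= 0.15:
        return "B"
    return "C"


def z_for_segment(segment: str) -> float:
    """Z-score implied by the segment's service level target."""
    return NormalDist().inv_cdf(SERVICE_LEVEL_BY_SEGMENT[segment])


for margin, strategic in [(0.55, False), (0.20, False), (0.05, False), (0.05, True)]:
    segment = assign_segment(margin, strategic)
    print(f"margin={margin:.0%} strategic={strategic} -> segment {segment}, "
          f"Z={z_for_segment(segment):.2f}")
```

Because safety stock scales linearly with Z in the formulas above, moving a SKU from a 95% to a 99% target raises its buffer by roughly 40%, while dropping it to 90% cuts the buffer by roughly a fifth.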

The issue: After setting safety stock, most organizations don't track whether those levels are actually achieving target service levels.

The impact: You might be carrying $10 million in excess buffer for products that never stock out, while under-buffered products experience chronic availability issues. Without feedback, you can't optimize.

What's needed: Closed-loop monitoring that connects safety stock levels to actual service level outcomes and adjusts accordingly.
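
The feedback loop can be sketched as a comparison between target and achieved service level per SKU. This is an illustrative outline under stated assumptions: achieved service level is approximated as the share of orders filled from stock, and the `SkuPerformance` class, the `classify` function, and the 2-point tolerance are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SkuPerformance:
    sku: str
    target_service_level: float
    orders_requested: int
    orders_filled_from_stock: int

    @property
    def achieved_service_level(self) -> Optional[float]:
        """Share of orders filled from stock, or None when there is no demand history."""
        if self.orders_requested == 0:
            return None
        return self.orders_filled_from_stock / self.orders_requested


def classify(perf: SkuPerformance, tolerance: float = 0.02) -> str:
    """Flag SKUs whose achieved service level is well above or below target."""
    achieved = perf.achieved_service_level
    if achieved is None:
        return "no-data"
    gap = achieved - perf.target_service_level
    if gap > tolerance:
        return "over-buffered"
    if gap < -tolerance:
        return "under-buffered"
    return "on-target"


skus = [
    SkuPerformance("SKU-001", 0.95, 1000, 1000),  # never stocks out
    SkuPerformance("SKU-002", 0.95, 1000, 880),   # chronic availability issues
    SkuPerformance("SKU-003", 0.95, 1000, 950),   # meeting target
]
for perf in skus:
    print(perf.sku, classify(perf))
```

SKUs flagged as over-buffered are candidates for a lower buffer, and under-buffered SKUs for a higher one, which closes the loop between the safety stock setting and its outcome.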

Dynamic safety stock optimization continuously adjusts buffer levels based on current conditions rather than static historical formulas.

Dynamic optimization considers:

Demand signals:

Supply signals:

Business context:

Not all products deserve the same safety stock investment. Segment by:

Before optimizing, understand your baseline:

Look for:

Set up systems to track:

Move from manual quarterly reviews to automated recommendations:

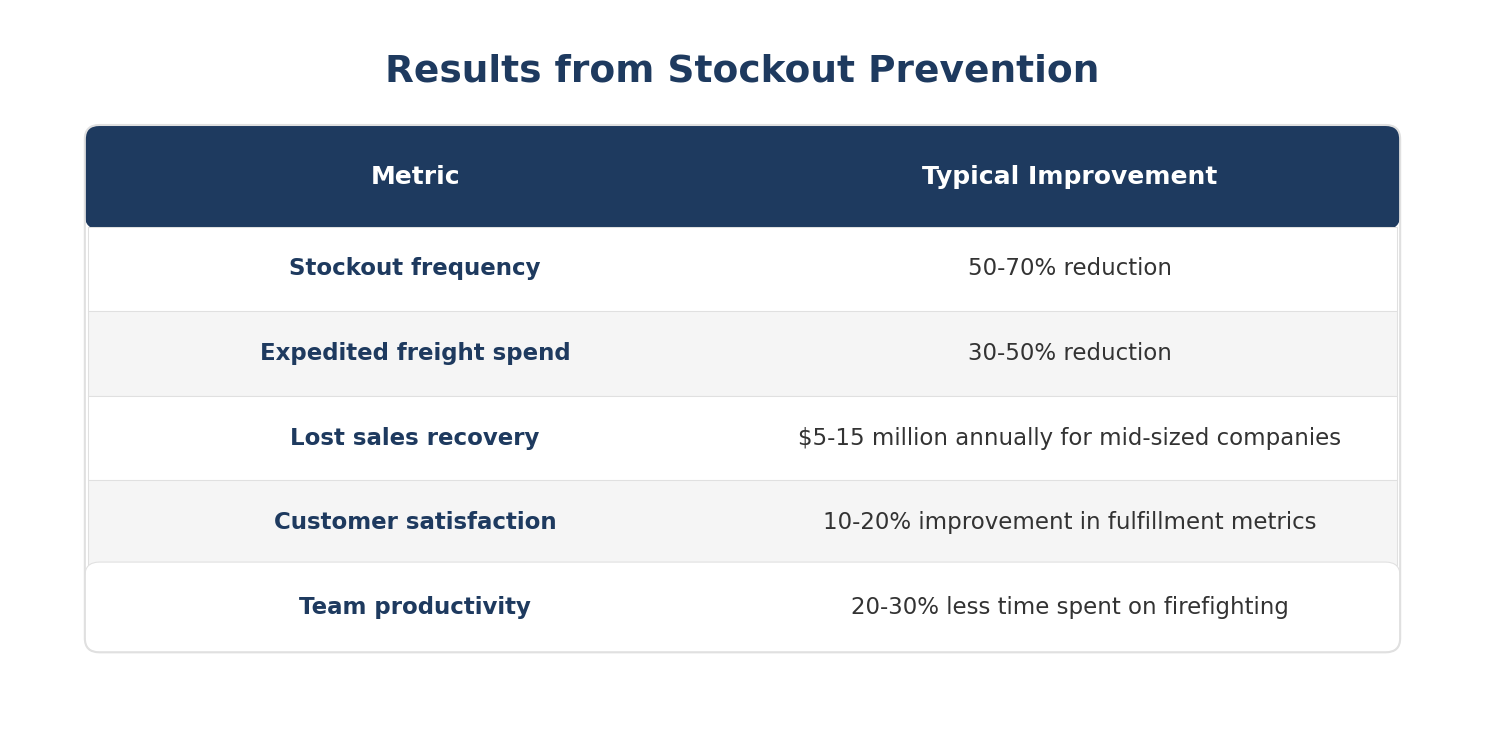

Organizations that implement dynamic safety stock optimization typically achieve:

The key insight: most companies can simultaneously reduce safety stock AND improve service levels because current buffers are poorly allocated, not because they need more inventory overall.

Incorta gives supply chain teams the real-time visibility and automated workflows needed to move from static safety stock formulas to dynamic optimization.

A live digital twin of your ERP. Incorta's Direct Data Mapping creates a unified, real-time digital twin of your entire ERP and related systems. Every inventory position, every demand signal, every supplier shipment is visible in its original granularity. You see actual current conditions, not historical averages.

Real-time inputs for safety stock calculations. Instead of calculating demand variability from last year's data, Incorta shows you current demand velocity and variability. Instead of assuming supplier lead times match contracts, you see actual performance. Your safety stock inputs reflect reality.

Dynamic recommendations. Incorta adapts safety stock recommendations to current demand variability, supplier reliability, and forecast accuracy. As conditions change, recommendations auto-adjust. You're not locked into quarterly formulas that don't reflect current reality.

Closed-loop performance monitoring. Connect safety stock levels to actual service level outcomes. See which SKUs are over-buffered (high safety stock, zero stockouts) and which are under-buffered (chronic availability issues). Optimize based on evidence, not assumptions.

Embedded workflows. When safety stock should be optimized, Incorta can trigger workflows that update replenishment rules. Recommendations become action without manual intervention or IT dependency.

The result: teams optimize buffer inventory based on current conditions, freeing working capital while improving service levels.


Stockouts occur when inventory runs out before replenishment arrives, leading to lost sales, expedited freight costs, and damaged customer relationships. Most companies experience 2-4 significant stockouts per quarter, but struggle to prevent them because they can't see the risk coming or understand root causes after the fact. This guide explains what causes stockouts, how to predict them, and how to build systems that prevent them.

A stockout (also called an out-of-stock or OOS) happens when a product is unavailable for sale or use because inventory has been depleted. Stockouts can occur at any point in the supply chain:

Stockouts are different from backorders. A stockout means the product is simply unavailable. A backorder means the customer can still place an order for future fulfillment.

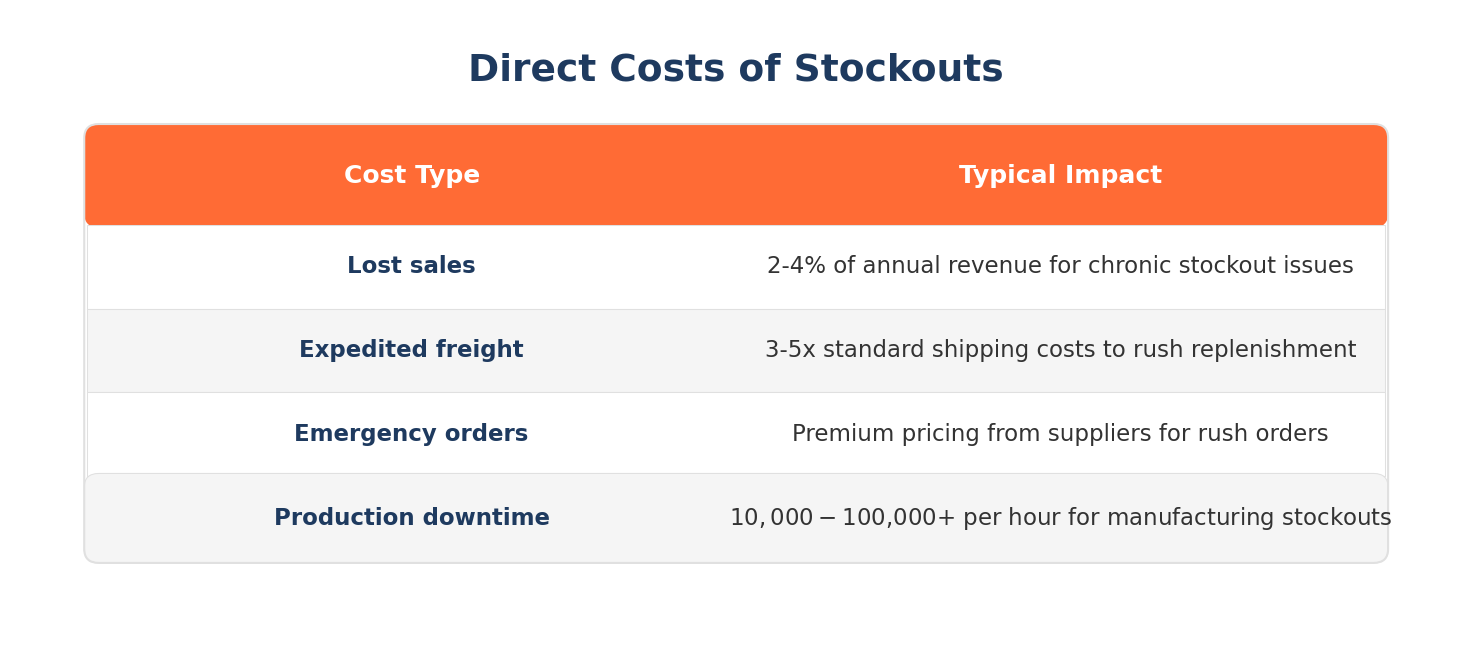

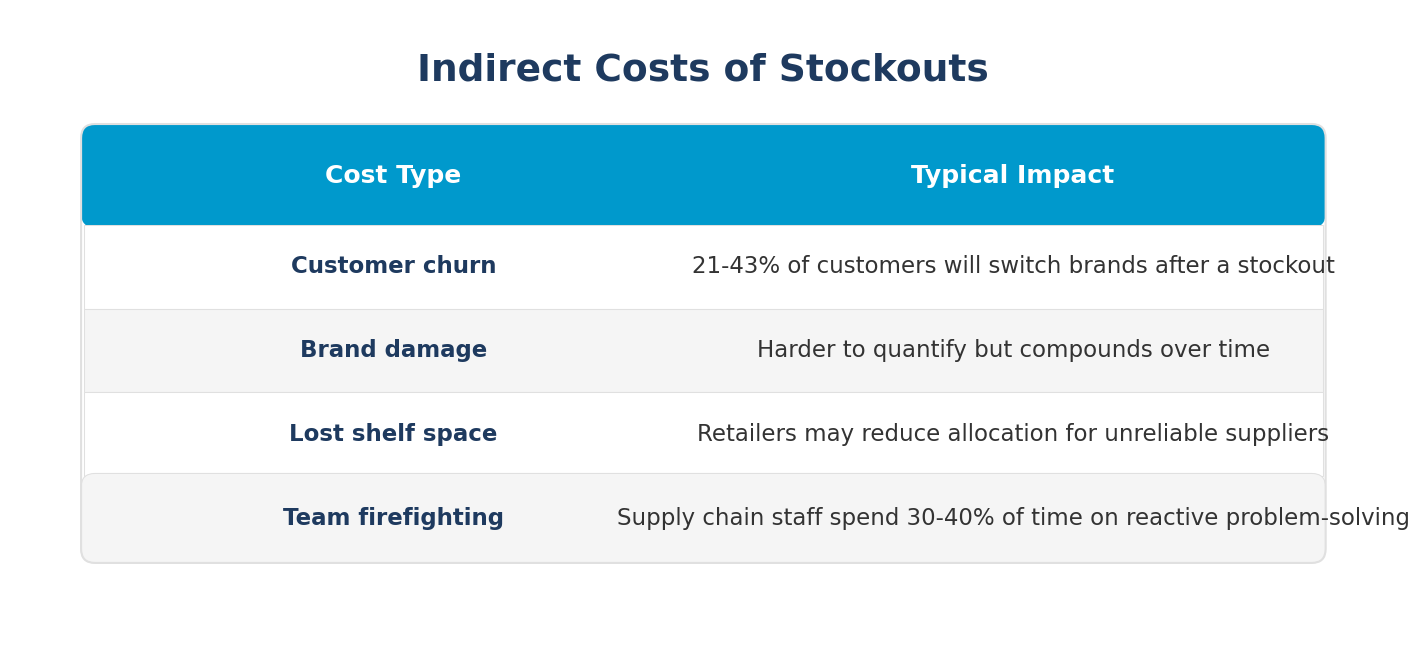

Stockouts create both direct and indirect costs:

Stockouts typically result from a combination of factors:

Demand forecast errors. Forecasts underestimate actual demand, leading to insufficient inventory. This is especially common during promotions, seasonal peaks, or when new products cannibalize existing SKUs.

Unexpected demand spikes. Viral social media moments, competitor stockouts, weather events, or news coverage can create sudden demand surges that outpace inventory.

Omnichannel complexity. Inventory allocated to one channel (retail stores) may not be visible or available to another channel (e-commerce), creating artificial stockouts.

Supplier delays. Late shipments, quality issues, or capacity constraints at suppliers push back replenishment timing.

Lead time variability. Actual lead times exceed planned lead times, causing inventory to deplete before replenishment arrives.

Transportation disruptions. Port congestion, carrier capacity issues, or logistics failures delay inbound shipments.
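
The supply-side causes above share one mechanism: replenishment arrives later than planned while demand keeps drawing down stock. A simple days-of-cover check makes that concrete. The function names and numbers below are hypothetical, and the sketch assumes a constant daily demand rate.

```python
import math


def days_of_cover(on_hand_units: float, daily_demand: float) -> float:
    """Days until stock runs out at the current demand rate."""
    if daily_demand <= 0:
        return math.inf
    return on_hand_units / daily_demand


def stockout_gap_days(on_hand_units: float, daily_demand: float,
                      days_until_replenishment: float) -> float:
    """Days with no stock before replenishment arrives (0.0 means no stockout)."""
    return max(0.0, days_until_replenishment - days_of_cover(on_hand_units, daily_demand))


# Hypothetical SKU: 1,200 units on hand, selling 100 units per day.
planned = stockout_gap_days(1200, 100, days_until_replenishment=10)
delayed = stockout_gap_days(1200, 100, days_until_replenishment=15)

print(f"Planned 10-day lead time: {planned:.0f} days out of stock")
print(f"Shipment delayed to day 15: {delayed:.0f} days out of stock")
```

In this example the planned lead time leaves two days of slack, but a five-day delay turns that slack into three days without stock.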

Safety stock set too low. Buffer inventory is insufficient to absorb demand or supply variability.